Tech Notes

Technical Notes About Foyle

Objective:

Describe how we want to use technical notes and establish guidelines on authoring technical notes (TNs) for consistency.

Why TechNotes?

We use TNs to communicate key ideas, topics, practices, and decisions for the project. TNs provide a historical record with minimal burden on the authors or readers. We will use TNs in Foyle to document our team engineering practices and technical “stakes-in-the-ground” in a “bite-sized” manner. Use a TN instead of email to communicate important/durable decisions or practices. Follow this up with an email notification to the team when the document is published.

TNs are not meant to replace documents such as User Guides or Detailed Design documents. However, many aspects of such larger docs can be discussed and formalized using a TN to feed into the authoring of these larger docs.

Guidelines

- All TNs have a unique ID of the form TNxxx, where xxx is a sequential number.

- Keep TNs brief, clear and to the point.

- Primary author is listed as owner. Secondary authors are recognized as co-creators.

- Allow contributors to add themselves in the contributors section.

- TNs are considered immutable once they are published. They should be deprecated or superseded by new TNs. This provides a historical timeline and prevents guesswork on whether a TN is still relevant.

- Only mark a TN published when ready (and approved, if there is an approver).

- If a TN is deprecated mark it so. If there is a newer version that supersedes it, put the new doc in the front matter.

Happy Tech-Noting

1 - TN001 Logging

Design logging to support capturing human feedback.

Objective

Design logging to support capturing human feedback.

TL;DR

One of the key goals of Foyle is to collect human feedback to improve the quality of the AI.

The key interaction that we want to capture is as follows

- User asks the AI to generate one or more commands

- User edits those commands

- User executes those commands

In particular, we want to be able to link the commands that the AI generated to the commands that the user ultimately executes. We can do this as follows

- When the AI generates any blocks it attaches a unique block id to each block

- When the frontend makes a request to the backend, the request includes the block ids of the blocks

- The blockid can then be used as a join key to link what the AI produced with what a human ultimately executed

Implementation

Protos

We need to add a new field to the Block proto to store the block id.

message Block {
  ...
  string block_id = 7;
}

As of right now, we don’t attach a block id to the BlockOutput proto because

- We don’t have an immediate need for it

- Since BlockOutput is a child of a Block, it is already linked to an id

Backend

On the backend we can rely on structured logging to log the various steps (e.g. RAG) that go into producing a block.

We can add log statements to link a block id to a traceId so that we can see how that block was generated.

Logging Backend

When it comes to a logging backend the simplest most universal solution is to write the structured logs as JSONL to a

file. The downside of this approach is that this schema wouldn’t be efficient for querying. We could solve that by

choosing a logging backend that supports indexing. For example, SQLite or Google Cloud Logging. I think it will

be simpler to start by appending to a file. Even if we end up adding SQLite it might be advantageous to have a separate

ETL pipeline that reads the JSONL and writes it to SQLite. This way each entry in the SQLite database could potentially

be a trace rather than a log entry.
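As a rough illustration of the JSONL approach, the sketch below encodes one structured log entry per line using only the Go standard library. The `LogEntry` type and its field names (`traceId`, `blockId`) are assumptions made for this example; the schema that Foyle's logger actually emits may differ.

```go
package main

import (
	"encoding/json"
	"fmt"
	"strings"
)

// LogEntry is a hypothetical structured log record. The field names are
// illustrative and are not Foyle's actual log schema.
type LogEntry struct {
	Message string `json:"message"`
	TraceID string `json:"traceId"`
	BlockID string `json:"blockId,omitempty"`
}

// encodeJSONL serializes entries as JSONL: one JSON object per line.
func encodeJSONL(entries []LogEntry) (string, error) {
	var sb strings.Builder
	enc := json.NewEncoder(&sb)
	for _, e := range entries {
		// Encode writes the JSON object followed by a newline.
		if err := enc.Encode(e); err != nil {
			return "", err
		}
	}
	return sb.String(), nil
}

func main() {
	out, err := encodeJSONL([]LogEntry{
		{Message: "retrieved examples", TraceID: "trace-1"},
		{Message: "generated block", TraceID: "trace-1", BlockID: "block-1"},
	})
	if err != nil {
		panic(err)
	}
	fmt.Print(out)
}
```

Because every line is a complete JSON object, a later ETL step can read the file line by line and group entries by `traceId` without parsing the whole file at once.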

To support this we need to modify App.SetupLogging to log to the appropriate file.

I don’t think we need to worry about log rotation. I think we can just generate a new timestamped file each time Foyle starts. In cases where Foyle might be truly long running (e.g. deployed on K8s) we should probably just be logging to stdout/stderr and relying on existing mechanisms (e.g. Cloud Logging) to ingest those logs.

Logging Delete Events

We might also want to log delete block events. If the user deletes an AI generated block that’s a pretty strong signal

that the AI made a mistake; for example providing an overly verbose command. We could log these events by adding

a VSCode handler for the delete event. We could then add an RPC to the backend to log these events. I’ll probably

leave this for a future version.

Finding Mistakes

One of the key goals of logging is to find mistakes. In the case of Foyle, a mistake is where the AI generated block

got edited by the human before being executed. We can create a simple batch job (possibly using Dataflow) to find

these examples. The job could look like the following

- Filter the logs to find entries and emit tuples corresponding to AI generated blocks (block_id, trace_id, contents) and executed blocks (block_id, trace_id, contents)

- Join the two streams on block_id

- Compare the contents of the two blocks; if they aren’t the same then it was a mistake
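The job outlined above can be sketched in plain Go before reaching for a batch framework. The `BlockRecord` and `Mistake` types and the `findMistakes` function are hypothetical names introduced for this example; they are not part of Foyle's code.

```go
package main

import "fmt"

// BlockRecord is a hypothetical tuple extracted from the logs.
type BlockRecord struct {
	BlockID  string
	TraceID  string
	Contents string
}

// Mistake pairs what the AI generated with what the user executed.
type Mistake struct {
	BlockID   string
	Generated string
	Executed  string
}

// findMistakes joins generated and executed records on BlockID and returns
// the pairs whose contents differ.
func findMistakes(generated, executed []BlockRecord) []Mistake {
	byID := make(map[string]BlockRecord, len(generated))
	for _, g := range generated {
		byID[g.BlockID] = g
	}
	var mistakes []Mistake
	for _, e := range executed {
		g, ok := byID[e.BlockID]
		if !ok {
			continue // the executed block was not generated by the AI
		}
		if g.Contents != e.Contents {
			mistakes = append(mistakes, Mistake{e.BlockID, g.Contents, e.Contents})
		}
	}
	return mistakes
}

func main() {
	generated := []BlockRecord{{"b1", "t1", "kubectl get pods"}}
	executed := []BlockRecord{{"b1", "t2", "kubectl get pods -n dev"}}
	for _, m := range findMistakes(generated, executed) {
		fmt.Printf("%s: %q was edited to %q\n", m.BlockID, m.Generated, m.Executed)
	}
}
```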

References

Distributed Tracing

2 - TN002 Learning from Human Feedback

Allow Foyle to learn from human feedback.

Objective

Allow Foyle to learn from human feedback.

TL;DR

If a user corrects a command generated by the AI, we want to be able to use that feedback to improve the AI.

The simplest way to do this is using few shot prompting. This tech note focuses on how we will collect and retrieve

the examples to enrich the prompts. At a high level, the process will look like the following

- Process logs to identify examples where the human corrected the AI generated response

- Turn each mistake into one or more examples of a query and response

- Compute and store the embeddings of the query

- Use brute force to compute distance to all embeddings

This initial design prioritizes simplicity and flexibility over performance. For example, we may want to experiment with using AI to generate alternative versions of a query. For now, we avoid using a vector database to efficiently store and query a large corpus of documents. I think it’s premature to optimize for large corpora given users may not have large corpora.

Generate Documents

The first step of learning from human feedback is to generate examples that can be used to train the AI. We can

think of each example as a tuple (Doc, Blocks) where Doc is the document sent to the AI for completion and

Blocks are the Blocks the AI should return.

We can obtain these examples from our block logs. A good starting point is to look

at where the AI made a mistake; i.e. the human had to edit the command the AI provided. We can obtain these

examples by looking at our BlockLogs and finding logs where the executed cell differed from the generated block.

There are lots of ways we could store tuples (Doc, Blocks) but the simplest, most obvious way is to append the desired block to Doc.blocks and then serialize the Doc proto as a .foyle file. We can start by assuming that the query corresponds to all but the last block, Doc.blocks[:-1], and the expected answer is the last block, Doc.blocks[-1]. This will break when we want to allow for the AI to respond with more than 1 block. However, to get started this should be good enough. Since the embeddings will be stored in an auxiliary file containing a serialized proto we could extend that to include information about which blocks to use as the answer.

Embeddings

In Memory + Brute Force

With text-embedding-3-small, embeddings have dimension 1536 and are float32; so 6KB/Embedding. If we have 1000 documents that’s 6MB.

This should easily fit in memory for the near future.

Computation wise computing a dot product against 1000 documents is about 3.1 Million Floating Point Operations(FLOPS).

This is orders of magnitude less than LLAMA2 which clocks in at 1700 Giga Flops.

Given people are running LLAMA2 locally (albeit on GPUs) seems like we should be able to get pretty far with

a brute force approach.
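The figures above follow from simple arithmetic, assuming 4 bytes per float32 and counting one multiplication and one addition per dimension for each dot product:

$$
1536 \times 4\ \text{bytes} = 6144\ \text{bytes} \approx 6\ \text{KB per embedding}, \qquad 1000 \times 6\ \text{KB} \approx 6\ \text{MB}
$$

$$
1000 \times (1536\ \text{multiplications} + 1535\ \text{additions}) \approx 3.1 \times 10^{6}\ \text{floating point operations}
$$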

A brute force in memory option would work as follows

- Load all the vector embeddings of documents into memory

- Compute a dot product using matrix multiplication

- Find the K documents with the smallest distance to the query (for normalized embeddings, these are the ones with the largest dot product)
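A minimal sketch of the brute force search, assuming the embeddings are normalized so that the largest dot product corresponds to the smallest distance. The `dot` and `nearest` helpers are hypothetical names for this example, and a plain loop stands in for a matrix multiplication library.

```go
package main

import (
	"fmt"
	"sort"
)

// dot returns the dot product of two equal-length vectors.
func dot(a, b []float32) float32 {
	var s float32
	for i := range a {
		s += a[i] * b[i]
	}
	return s
}

// nearest returns the indices of the k documents most similar to the query,
// ordered from most to least similar. It assumes all vectors are normalized.
func nearest(query []float32, docs [][]float32, k int) []int {
	scores := make([]float32, len(docs))
	idx := make([]int, len(docs))
	for i, d := range docs {
		scores[i] = dot(query, d)
		idx[i] = i
	}
	// Sort document indices by descending similarity.
	sort.SliceStable(idx, func(x, y int) bool {
		return scores[idx[x]] > scores[idx[y]]
	})
	if k > len(idx) {
		k = len(idx)
	}
	return idx[:k]
}

func main() {
	docs := [][]float32{{1, 0}, {0, 1}, {0.6, 0.8}}
	fmt.Println(nearest([]float32{1, 0}, docs, 2))
}
```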

Serializing the embeddings.

To store the embeddings we will use a serialized proto that lives side by side with the file we computed the embeddings for. As proposed in the previous section, we will store the example in the file ${BLOCKLOG}.foyle; its embeddings will then live in the file “${BLOCKLOG}.binpb”. This will contain a serialized proto like the following

message Example {
  repeated float embedding = 1;
}

We use a proto so that we can potentially enrich the data format over time. For example, we may want to

- Store a hash of the source text so we can determine when to recompute embeddings

- Store additional metadata

- Store multiple embeddings for the same document corresponding to different segmentations

This design makes it easy to add/remove documents from the collection. We can

- Add “.foyle” or “.md” documents

- Use globs to match files

- Check if the embeddings already exist

The downside of this approach is likely performance. Opening and deserializing large numbers of files is almost certainly going to be less efficient than using a format like hdf5 that is optimized for matrices.

Learn command

We can add a command to the Foyle CLI to perform all these steps.

This command will operate in a level based, declarative way. Each time it is invoked it will determine what work needs to be done and then perform it. If no additional work is needed it will be a no-op. This design means we can run it periodically as a background process so that learning happens automatically.

Here’s how it will work; it will iterate over the log entries in the block logs to identify logs that need to

be processed. We can use a watermark to keep track of processed logs to avoid constantly rereading the entire log

history. For each BlockLog that should be turned into an example we can look for the file {BLOCK_ID}.foyle;

if the file doesn’t exist then we will create it.

Next, we can check that for each {BLOCK_ID}.foyle file there is a corresponding {BLOCK_ID}.embeddings.binpb

file. If it doesn’t exist then we will compute the embeddings.
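The level based behavior can be sketched as a pure function that compares the desired files with the files that already exist. The `plan` type and `reconcile` function are hypothetical; the set of existing files is passed in as a map so the example stays independent of the filesystem.

```go
package main

import "fmt"

// plan describes the work a single invocation of the learn command would do.
type plan struct {
	CreateExamples    []string // example files to create
	ComputeEmbeddings []string // embedding files to compute
}

// reconcile compares the desired state (one example file and one embeddings
// file per block id) with the files that already exist and returns whatever
// is missing. An empty plan means the invocation is a no-op.
func reconcile(blockIDs []string, existing map[string]bool) plan {
	var p plan
	for _, id := range blockIDs {
		example := id + ".foyle"
		embeddings := id + ".embeddings.binpb"
		if !existing[example] {
			p.CreateExamples = append(p.CreateExamples, example)
		}
		if !existing[embeddings] {
			p.ComputeEmbeddings = append(p.ComputeEmbeddings, embeddings)
		}
	}
	return p
}

func main() {
	existing := map[string]bool{"a.foyle": true, "a.embeddings.binpb": true, "b.foyle": true}
	p := reconcile([]string{"a", "b"}, existing)
	fmt.Println("create:", p.CreateExamples, "embed:", p.ComputeEmbeddings)
}
```

Running the same function again after the missing files have been written yields an empty plan, which is what makes it safe to invoke periodically.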

Discussion

Why Only Mistakes

In the current proposal we only turn mistakes into examples. In principle, we could use examples where the model

got things right as well. The intuition is that if the model is already correctly handling a query; there’s no reason

to include few shot examples. Arguably, those positive examples might end up confusing the AI if we end up retrieving

them rather than examples corresponding to mistakes.

Why duplicate the documents?

The ${BLOCK_ID}.foyle files are likely very similar to the actual .foyle files the user created. An alternative

design would be to just reuse the original .foyle documents. This is problematic for several reasons.

The {BLOCK_ID}.foyle files are best considered internal to Foyle’s self-learning and shouldn’t be directly under

the user’s control. Under the current proposal the {BLOCK_ID}.foyle are generated from logs and represent snapshots

of the user’s documents at specific points in time. If we used the user’s actual files we’d have to worry about

them changing over time and causing problems. Treating them as internal to Foyle also makes it easier to move them

in the future to a different storage backend. It also doesn’t require Foyle to have access to the user’s storage system.

Use a vector DB

Another option would be to use a vector DB. Using a vector database (e.g. Weaviate, Chroma, Pinecone) adds a lot of

complexity. In particular, it creates an additional dependency which could be a barrier for users just interested in

trying Foyle out. Furthermore, a vector DB means we need to think about schemas, indexes, updates, etc… Until it’s clear we have sufficient data to benefit from a vector db it’s not worth introducing.

References

OpenAI Embeddings Are Normalized

3 - TN003 Learning Evaluation

Measure the efficacy of the learning system.

Objective

Measure the efficacy of the learning system.

TL;DR

The key hypothesis of Foyle is that by using implicit human feedback, we can create an AI that automatically learns

about your infrastructure. In TN002_Learning and PR#83

we implemented a very simple learning mechanism based on query dependent few shot prompting. The next step is to

quantitatively evaluate the effectiveness of this system. We’d like to construct an evaluation data set that consists of

queries whose answers depend on private knowledge of your infrastructure. We’d like to compare the performance of

Foyle before and after learning from human feedback. We propose to achieve this as follows

- Manually construct an evaluation dataset consisting of queries whose answers depend on private knowledge of your infrastructure, $\{(q_0, r_0), \ldots, (q_n, r_n)\}$.

- For each query $q_i$ in the evaluation dataset, generate a command $r'_i$ using the Agent.

- Compute an evaluation score using a metric similar to the edit distance

$$
S = \sum_{i=0}^n D(r_i, r'_i)
$$

- Compare the scores before and after learning from human feedback.

Learning: What Do We Want To Learn

In the context of DevOps there are different types of learning we can test for. The simplest things are facts.

These are discrete pieces of information that can’t be derived from other information. For example:

Query: “Which cluster is used for development?”

Response: “gcloud container clusters describe --region=us-west1 --project=foyle-dev dev”

The fact that your organization is using a GKE cluster in the us-west1 region in project foyle-dev for development

(and not an EKS cluster in us-west1) is something a teammate needs to tell you. In the context of our Agent, what

we want the agent to learn is alternative ways to ask the same question. For example,

“Show me the cluster where dev workloads run” should return the same response.

As a team’s infrastructure grows, they often develop conventions for doing things in a consistent way. A simple

example of this is naming conventions. For example, as the number of docker images grows, most organizations develop

conventions around image tagging; e.g. using live or prod to indicate the production version of an image.

In the context of Foyle, we’d like to evaluate the efficacy with which Foyle learns these conventions from examples.

For example, our training set might include the example

Query: “Show me the latest version of the production image for hydros”

Response: “gcloud artifacts docker images describe us-west1-docker.pkg.dev/foyle-public/images/hydros/hydros:prod”

Our evaluation set might include the example

Query: “Describe the production image for foyle”

Response: “gcloud artifacts docker images describe us-west1-docker.pkg.dev/foyle-public/images/foyle/foyle:prod”

Notably, unlike the previous example, in this case both the query and response are different. In order for the agent

to generalize to images not in the training set, it needs to learn the convention that we use to tag images.

Multi-step

We’d eventually like to be able to support multi-step queries. For example, a user might ask

“Show me the logs for the most recent image build for foyle”. This requires two steps

- Listing the images to find the sha of the most recent image

- Querying for the logs of the image build with that sha

We leave this for future work.

Building an Evaluation Set

We will start by hand crafting our evaluation set.

Our evaluation set will consist of a set of .foyle documents checked into source control in the data

directory. The evaluation data set is specific to an organization and its infrastructure. We will use the infrastructure

of Foyle’s development team as the basis for our evaluation set.

To test Foyle’s ability to learn conventions we will include examples that exercise conventions for non-existent

infrastructure (e.g. images, deployments, etc…). Since they don’t exist, a user wouldn’t actually query for them

so they shouldn’t appear in our logs.

We can automatically classify each example in the evaluation set as either a memorization or generalization example

based on whether the response matches one of the responses in the training set. We can use the distance metric proposed

below to measure the similarity between the generated command and the actual command.

Evaluating correctness

We can evaluate the correctness of the Agent by comparing the generated commands with the actual commands.

We can use an error metric similar to edit distance. Rather than comparing individual characters, we will compare arguments as a whole.

First we will divide a command into positional and named arguments. For this purpose the command itself is the first positional argument. For the positional arguments we compute the edit distance, treating each positional argument as a single token. For the named arguments we match arguments by name and count the number of incorrect, missing, and extra arguments. We can denote this as follows. Let $a$ and $b$ be the sequences of positional arguments for two different commands $r_a$ and $r_b$ that we wish to compare.

$$
a = \{a_1, \ldots, a_m\}
$$

$$
b = \{b_1, \ldots, b_n\}
$$

Then we can define the edit distance between the positional arguments as follows, where $D_p(i, j)$ is the distance between the first $i$ arguments of $a$ and the first $j$ arguments of $b$ (so $D_p(0, 0) = 0$)

$$
distance = D_p(m,n)
$$

$$
D_p(i,0) = \sum_{k=1}^{i} w_{del}(a_k)
$$

$$
D_p(0,j) = \sum_{k=1}^{j} w_{ins}(b_k)
$$

$$
D_p(i, j) = \begin{cases}
D_p(i-1, j-1) & \text{if } a_i = b_j \\
\min \begin{cases}
D_p(i-1, j) + w_{del}(a_i) \\
D_p(i, j-1) + w_{ins}(b_j) \\
D_p(i-1, j-1) + w_{sub}(a_i, b_j)
\end{cases} & \text{if } a_i \neq b_j
\end{cases}
$$

Here $w_{del}$, $w_{ins}$, and $w_{sub}$ are weights for deletion, insertion, and substitution respectively. If $w_{del} = w_{ins}$ then the distance is symmetric $D(r_a, r_b) = D(r_b, r_a)$.

We can treat named arguments as two dictionaries c and d. We can define the edit distance between the named arguments as follows

$$

K = \text{keys}(c) \cup \text{keys}(d)

$$

$$

D_n = \sum_{k \in K} f(k)

$$

$$
f(k) = \begin{cases}
w_{del}(c[k]) & \text{if } k \notin \text{keys}(d) \\
w_{ins}(d[k]) & \text{if } k \notin \text{keys}(c) \\
w_{sub}(c[k], d[k]) & \text{if } k \in \text{keys}(c), k \in \text{keys}(d), c[k] \neq d[k] \\
0 & \text{otherwise}
\end{cases}
$$

This definition doesn’t properly account for the fact that named arguments often have a long and short version. We ignore this for now and accept that if one command uses the long version and the other uses the short version, we will count these as errors. In the future, we could try building a dictionary of known commands and normalizing the arguments to a standard form.

This definition also doesn’t account for the fact that many CLIs have subcommands and certain named arguments must appear before or after certain subcommands. The definition above would treat a named argument as a match as long as it appears anywhere in the command.

The total distance between two commands is then

$$

D = D_p + D_n

$$
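To make the metric concrete, here is a sketch in Go that uses unit weights for deletion, insertion, and substitution. The parsing rule is a simplifying assumption for this example: tokens are split on whitespace, and only tokens of the form `--name=value` carry a value; a flag written as `-n dev` is treated as a valueless flag followed by a positional argument. The function names are hypothetical.

```go
package main

import (
	"fmt"
	"strings"
)

// parse splits a command into positional and named arguments using the
// simplified rule described above.
func parse(cmd string) (pos []string, named map[string]string) {
	named = map[string]string{}
	for _, tok := range strings.Fields(cmd) {
		if strings.HasPrefix(tok, "-") {
			name, value, _ := strings.Cut(tok, "=")
			named[name] = value
		} else {
			pos = append(pos, tok)
		}
	}
	return pos, named
}

func min3(x, y, z int) int {
	m := x
	if y < m {
		m = y
	}
	if z < m {
		m = z
	}
	return m
}

// positionalDistance is the edit distance D_p over whole arguments.
func positionalDistance(a, b []string) int {
	// d[i][j] is the distance between the first i args of a and the first j of b.
	d := make([][]int, len(a)+1)
	for i := range d {
		d[i] = make([]int, len(b)+1)
		d[i][0] = i
	}
	for j := 0; j <= len(b); j++ {
		d[0][j] = j
	}
	for i := 1; i <= len(a); i++ {
		for j := 1; j <= len(b); j++ {
			if a[i-1] == b[j-1] {
				d[i][j] = d[i-1][j-1]
				continue
			}
			d[i][j] = 1 + min3(d[i-1][j], d[i][j-1], d[i-1][j-1])
		}
	}
	return d[len(a)][len(b)]
}

// namedDistance is D_n: one unit for every named argument that is missing,
// extra, or has a different value.
func namedDistance(c, d map[string]string) int {
	dist := 0
	for k, cv := range c {
		if dv, ok := d[k]; !ok || dv != cv {
			dist++
		}
	}
	for k := range d {
		if _, ok := c[k]; !ok {
			dist++
		}
	}
	return dist
}

// distance is the total D = D_p + D_n between two commands.
func distance(ra, rb string) int {
	pa, na := parse(ra)
	pb, nb := parse(rb)
	return positionalDistance(pa, pb) + namedDistance(na, nb)
}

func main() {
	expected := "gcloud container clusters describe --region=us-west1 --project=foyle-dev dev"
	generated := "gcloud container clusters list --region=us-east1 dev"
	fmt.Println(distance(expected, generated))
}
```

In the example in `main`, one positional argument is substituted (`describe` vs `list`), one named argument has the wrong value (`--region`), and one is missing (`--project`), for a total distance of 3.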

Guardrails to avoid data leakage

We want to ensure that the evaluation set doesn’t contain any queries that are in the training set. There are two cases we need to consider

- Memorization: Queries are different but command is the same

- These are valid training, evaluation pairs because we expect the command to exactly match even though the queries are different

- Contamination: Queries are the same and commands are the same

We can use the distance metric proposed in the previous section to determine if a command in our training set matches the command in the eval dataset.

We can also use this to automatically classify each evaluation example as a memorization or generalization example. If the distance from the command in the evaluation set to the closest command in the training set is less than some threshold, we can classify it as a memorization example. Otherwise we can classify it as a generalization example.
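The classification rule can be sketched as follows. The `classify` function is a hypothetical helper that takes the distances from one evaluation command to every training command, computed with whatever distance metric is in use; the threshold value is a tunable assumption.

```go
package main

import "fmt"

// classify labels an evaluation example given the distances from its command
// to every command in the training set.
func classify(distances []int, threshold int) string {
	if len(distances) == 0 {
		return "generalization" // nothing in the training set to memorize
	}
	closest := distances[0]
	for _, d := range distances[1:] {
		if d < closest {
			closest = d
		}
	}
	if closest < threshold {
		return "memorization"
	}
	return "generalization"
}

func main() {
	fmt.Println(classify([]int{3, 0, 5}, 1))
	fmt.Println(classify([]int{3, 2}, 1))
}
```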

Add an Option to filter out eval

When processing an evaluation dataset we should probably denote in the logs that the request was part of the evaluation set. This way during the learning process we can filter it out to avoid learning our evaluation dataset.

An easy way to do this would be to include a log field eval that is set to true for all evaluation examples. When constructing the logger in Agent.Generate we can set this field to true if the Agent is in eval mode.

We still want the Agent to log the evaluation examples so we can use the logs and traces to evaluate the Agent.

In the future we could potentially set the eval field in the request if we want to use the same server for both training and evaluation. For now, we’d probably run the Agent as a batch job and not start a server.

Alternatives

LLM Evaluation

Using an LLM to evaluate the correctness of generated responses is another option. I’m not sure what advantage this would offer over the proposed metric. The proposed metric has the advantage that it is interpretable and deterministic. LLM scoring would introduce another dimension of complexity; in particular we’d potentially need to calibrate the LLM to align its scores with human scoring.

4 - TN004 Integration with Runme.Dev

Integrate Foyle’s AI capabilities with runme.dev.

Objective

Understand what it would take to integrate Foyle’s AI capabilities with runme.dev.

TL;DR

runme.dev is an OSS tool for running markdown workflows. At a high level its architecture

is very similar to Foyle’s. It has a frontend based on vscode notebooks which talks to a GoLang server. It has

several critical features that Foyle lacks:

- it supports native renderers which allow for output to be rendered as rich, interactive widgets in the notebook (examples)

- it supports streaming the output of commands

- it supports setting environment variables

- it supports redirecting stdout and stderr to files

- markdown is Runme’s native file format

- cell ids are persisted to markdown enabling lineage tracking when serializing to markdown

In short it is a mature and feature complete tool for running Notebooks. Foyle, in contrast, is a bare bones

prototype that has frustrating bugs (e.g. output isn’t scrollable). The

intent of Foyle was always to focus on the AI capabilities. The whole point of using VSCode Notebooks was to leverage

an existing OSS stack rather than building a new one. There’s no reason to build a separate stack if there is an

OSS stack that meets our needs.

Furthermore, it looks like the RunMe community has significantly more expertise in building VSCode Extensions

(vscode-awesome-ux and tangle).

This could be a huge boon because it could open the door to exploring better affordances for interacting with the AI.

The current UX in Foyle was designed entirely around minimizing the amount of frontend code.

Runme already has an existing community and users; it’s always easier to ship features to users than users to features.

For these reasons, integrating Foyle’s AI capabilities with Runme.dev is worth pursuing. Towards that end,

this document tries to identify the work needed for a POC of integrating Foyle’s AI capabilities with Runme.dev.

Building a POC seems like it would be relatively straightforward

- Foyle needs to add a new RPC to generate cells using Runme’s protos

- Runme’s vscode extension needs to support calling Foyle’s GenerateService and inserting the cells

Fortunately, since Runme is already assigning and tracking cell ids, very little needs to change to support

collecting the implicit feedback that Foyle relies on.

The current proposal is designed to minimize the number of changes to either code base. In particular, the current

proposal assumes Foyle would be started as a separate service and Runme would be configurable to call Foyle’s

GenerateService. This should be good enough for experimentation. Since Foyle and Runme are both GoLang gRPC services, building a single binary is highly feasible but might require some refactoring.

Background

Execution in Runme.Dev

runme.dev uses the RunnerService to execute programs in a kernel. Kernels are stateful. Using the RunnerService the client can create sessions which persist state across program executions. The Execute method can then be used to execute a program inside a session or create a new session if none is specified.

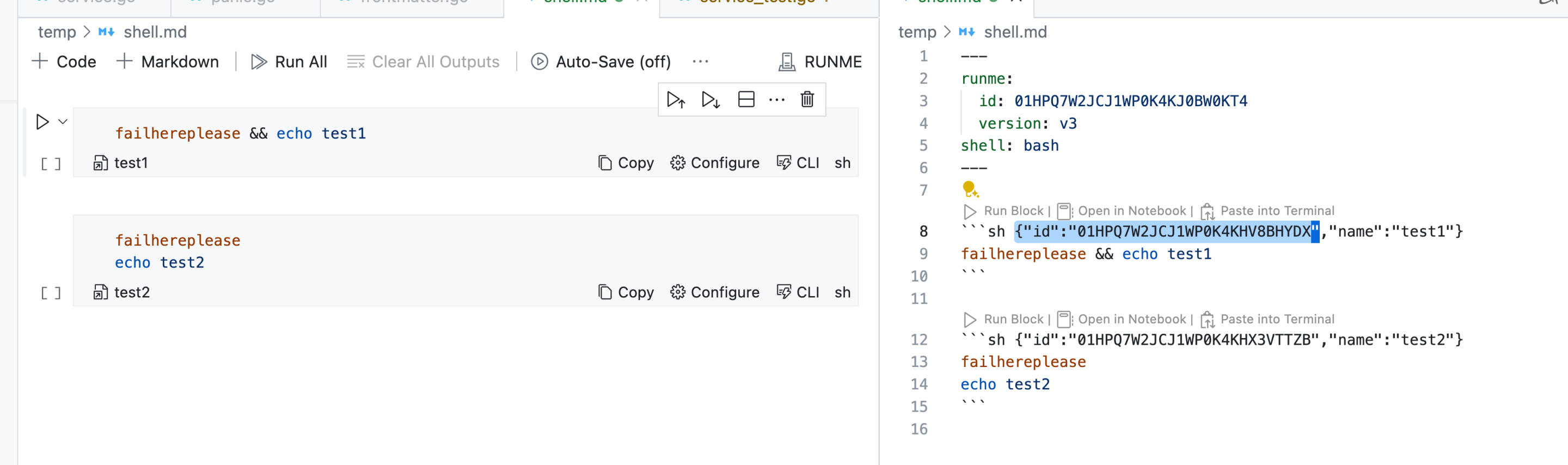

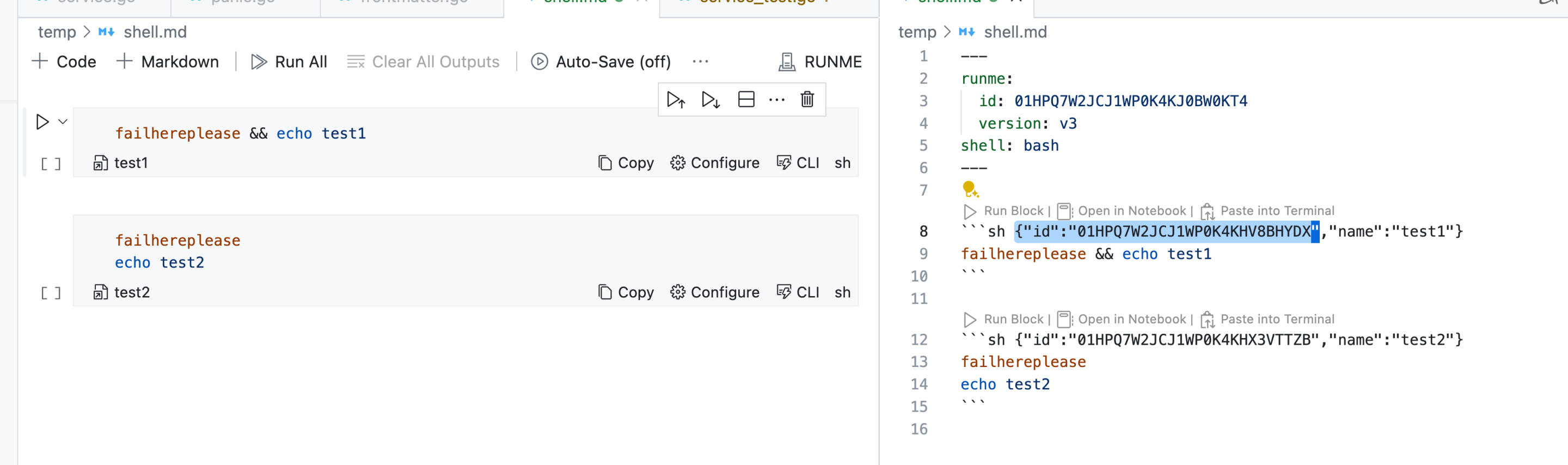

IDs in Runme.Dev

Runme assigns ULIDs to cells. These get encoded in the language annotation of the fenced block when serializing to markdown.

These ids are automatically passed along in the known_id field of the ExecuteRequest.

In the Cell proto, the id is stored in the metadata field id. When cells are persisted any information in the metadata will be stored in the language annotation of the fenced code block as illustrated above.

Logging in Runme.Dev

Runme uses Zap for logging. This is the same logger that Foyle uses.

Foyle Architecture

Foyle can be broken down into two main components

- GRPC Services that are a backend for the VSCode extension - these services are directly in the user request path

- Asynchronous learning - This is a set of asynchronous batch jobs that can be run to improve the AI. At a high level

these jobs are responsible for extracting examples from the logs and then training the AI on those examples.

Foyle has two GRPC services called by the VSCode extension

For the purpose of integrating with Runme.dev only GenerateService matters because we’d be relying on Runme.dev to

execute cells.

Integrating Foyle with Runme.Dev

Foyle Generate Service Changes

Foyle would need to expose a new RPC to generate cells using Runme’s protos.

message RunmeGenerateRequest {
  runme.parser.v1.Notebook notebook = 1;
}

message RunmeGenerateResponse {
  repeated runme.parser.v1.Cell cells = 1;
}

service RunmeGenerateService {
  rpc Generate (RunmeGenerateRequest) returns (RunmeGenerateResponse) {}
}

To implement this RPC we can just convert Runme’s protos to Foyle’s protos and then reuse the existing

implementation.

Since Runme is using ULIDs Foyle could generate a ULID for each cell. It could then log the ULID along with the

block id so we can link ULIDs to cell ids. In principle, we could refactor Foyle to use ULIDs instead of UUIDs.

Ideally, we’d reuse Runme’s ULID Generator to ensure consistency. That would require a bit of refactoring in Runme to make it accessible because it is currently private.

Runme Frontend Changes

Runme.dev would need to update its VSCode extension to call the new RunmeGenerateService and insert the cells.

This most likely entails adding a VSCode Command and hot key that the user can use to trigger the generation of cells.

Runme would presumably also want to add some configuration options to enable the Foyle plugin and configure the

endpoint.

Runme Logging Changes

Foyle relies on structured logging to track cell execution. Runme uses Zap for logging and uses structured logging

by default (code).

This is probably good enough for a V0 but there might be some changes we want to make in the future. Since the AI’s

ability to improve depends on structured logging to a file; we might want to configure two separate loggers so

a user’s configuration doesn’t interfere with the AI’s ability to learn. Foyle uses a Zap Tee Core to route logs to multiple sinks. Runme could potentially adopt the same approach if it’s needed.

I suspect Runme’s execution code is already instrumented to log the information we need but if not we may need to

add some logging statements.

Changes to Foyle Learning

We’d need to update Foyle’s learning logic to properly extract execution information from Runme’s logs.

Security & CORS

Foyle already supports CORS, so users can already configure CORS in Foyle to accept requests from Runme.dev if it’s running in the browser.

Discussion

There are two big differences between how Foyle and Runme work today

- Runme’s Kernel is stateful whereas Foyle’s Kernel is stateless

  - There is currently no concept of a session in Foyle

- Foyle currently runs vscode entirely in the web; Runme can run vscode in the web but depends on code-server

One potential use case where this comes up is if you want to centrally manage Foyle for an organization. I heard

from one customer that they wanted a centrally deployed webapp so that users didn’t have to install anything

locally. If the frontend is a progressive web app and the kernel is stateless then it’s easy, cheap, and highly

scalable to deploy this centrally. I’d be curious if this is a deployment configuration that Runme has heard customers

asking for?

References

Runme.dev source links

GRPC Server

Proto definitions

runner/service.go

- Implementation of the runner service

vscode-runme

Appendix: Miscellaneous notes about how runme.dev works

If an execute request doesn’t have a session id, a new session is created code

It uses a pseudo terminal to execute commands code

It looks like the VSCode extension relies on the backend doing serialization via RPC code

however it looks like they are experimenting with possibly using WASM to make it run in the client

NotebookCellManager

5 - TN005 Continuous Log Processing

Continuously compute traces and block logs

Objective

Continuously compute traces and block logs

TL;DR

Right now the user has to explicitly run

- foyle log analyze - to compute the aggregated traces and block logs

- foyle learn - to learn from the aggregated traces and block logs

This has a number of drawbacks that we’d like to fix (jlewi/foyle#84)

- Traces aren’t immediately available for debugging and troubleshooting

- User doesn’t immediately and automatically benefit from fixed examples

- Server needs to be stopped to analyze logs and learn because pebble can only be used from a single process (jlewi/foyle#126)

We’d like to fix this so that logs are continuously processed and learned from as a background

process in the Foyle application. This technote focuses on the first piece which is continuous log processing.

Background

Foyle Logs

Each time the Foyle server starts it creates a new timestamped log file.

Currently, we only expect one Foyle server to be running at a time.

Thus, at any given time only the latest log file might still be open for additional writes.

RunMe Logs

RunMe launches multiple instances of the RunMe gRPC server. I think it’s one per vscode workspace.

Each of these instances writes logs to a separate timestamped file.

Currently, we don’t have a good mechanism to detect when a log file has been closed and will receive no more writes.

Block Logs

Computing the block logs requires doing two joins

- We first need to join all the log entries by their trace ID

- We then need to key each trace by the block IDs associated with that

- We need to join all the traces related to a block id
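The two joins can be sketched with in-memory maps. The `Entry` type and the two grouping functions are hypothetical and keep only the fields needed for the joins; in the actual system the intermediate results would live in the KV store rather than in maps.

```go
package main

import (
	"fmt"
	"sort"
)

// Entry is a hypothetical log entry with only the fields needed for the joins.
type Entry struct {
	TraceID string
	BlockID string // empty if the entry doesn't refer to a block
	Message string
}

// groupByTrace performs the first join: log entries keyed by trace ID.
func groupByTrace(entries []Entry) map[string][]Entry {
	traces := map[string][]Entry{}
	for _, e := range entries {
		traces[e.TraceID] = append(traces[e.TraceID], e)
	}
	return traces
}

// groupByBlock performs the second join: for each block ID, the IDs of all
// traces containing at least one entry that refers to that block.
func groupByBlock(traces map[string][]Entry) map[string][]string {
	blocks := map[string][]string{}
	for traceID, entries := range traces {
		seen := map[string]bool{}
		for _, e := range entries {
			if e.BlockID != "" && !seen[e.BlockID] {
				seen[e.BlockID] = true
				blocks[e.BlockID] = append(blocks[e.BlockID], traceID)
			}
		}
	}
	for _, ids := range blocks {
		sort.Strings(ids) // map iteration order is random; sort for stable output
	}
	return blocks
}

func main() {
	entries := []Entry{
		{TraceID: "t1", BlockID: "b1", Message: "generated block"},
		{TraceID: "t1", Message: "returning response"},
		{TraceID: "t2", BlockID: "b1", Message: "executed block"},
	}
	fmt.Println(groupByBlock(groupByTrace(entries)))
}
```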

File Backed Queue Based Implementations

rstudio/filequeue implements a FIFO using a file per item

and relies on a timestamp in the filename to maintain ordering. It renames files to pop them from the queue.

nsqio/go-diskqueue uses numbered files to implement

a FIFO queue. A metadata file is used to track the position for reading and writing. The library automatically

rolls files when they reach a max size. Writes are asynchronous. Filesystem syncs happen periodically or whenever

a certain number of items have been read or written. If we set the sync count to be one it will sync after every write.

Proposal

Accumulating Log Entries

We can accumulate log entries keyed by their trace ID in our KV store; for the existing local implementation this will be

PebbleDB. In response to a new log entry we can fire an event to trigger a reduce operation on the log entries for that

trace ID. This can in turn trigger a reduce operation on the block logs.

We need to track a watermark for each file so we know what entries have been processed. We can use the following

data structure

// Map from a file to its watermark

type Watermarks map[string]Watermark

type Watermark struct {

// The offset in the file to continue reading from.

Offset int64

}

If a file is longer than the offset then there’s additional data to be processed. We can use

fsnotify to get notified when a file has changed.
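A minimal sketch of reading from a watermark, assuming the log files are newline-delimited (one entry per line). The helper names `readNewEntries` and `splitComplete` are hypothetical. A trailing partial line is treated as a write still in progress and is left for the next pass, so the watermark only ever advances past complete entries.

```go
package main

import (
	"bytes"
	"io"
	"os"
)

// Watermark mirrors the struct above: the offset to continue reading from.
type Watermark struct {
	Offset int64
}

// readNewEntries returns the complete lines written to path after wm,
// together with the advanced watermark.
func readNewEntries(path string, wm Watermark) ([][]byte, Watermark, error) {
	f, err := os.Open(path)
	if err != nil {
		return nil, wm, err
	}
	defer f.Close()

	if _, err := f.Seek(wm.Offset, io.SeekStart); err != nil {
		return nil, wm, err
	}
	data, err := io.ReadAll(f)
	if err != nil {
		return nil, wm, err
	}
	lines, next := splitComplete(data, wm)
	return lines, next, nil
}

// splitComplete splits data into newline-terminated lines and advances the
// watermark past each one. Bytes after the last newline are not consumed.
func splitComplete(data []byte, wm Watermark) ([][]byte, Watermark) {
	var lines [][]byte
	for {
		i := bytes.IndexByte(data, '\n')
		if i < 0 {
			break
		}
		lines = append(lines, data[:i])
		data = data[i+1:]
		wm.Offset += int64(i + 1)
	}
	return lines, wm
}
```

If the watermark is lost, calling `readNewEntries` with a zero `Watermark` simply reprocesses the file from the start.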

We could thus handle this as follows

- Create a FIFO where every event represents a file to be processed

- On startup register a fsnotifier for the directories containing logs

- Fire a sync event for each file when it is modified

- Enqueue an event for each file in the directories

- When processing each file read its watermark to determine where to read from

- Periodically persist the watermarks to disk and persist on shutdown

To make this work we need to implement a queue with a watermark.

An in memory FIFO is fine because the durability comes from persisting the watermarks. If the watermarks

are lost we would reprocess some log entries but that is fine. We can reuse Kubernetes

WorkQueue to get “stinginess”; i.e.

avoid processing a given file multiple times concurrently and ensure we only process a given item once if

it is enqueued multiple times before it can be processed.
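To make those queue semantics concrete, here is a small stand-alone sketch of the de-duplicating behaviour using only the standard library. `FileQueue` is a hypothetical name and this is not the workqueue API; it has no blocking `Get`, rate limiting or shutdown handling.

```go
package main

import "sync"

// FileQueue de-duplicates pending items and never hands out an item that
// is still being processed.
type FileQueue struct {
	mu         sync.Mutex
	queue      []string
	dirty      map[string]bool // items that need processing
	processing map[string]bool // items currently being processed
}

func NewFileQueue() *FileQueue {
	return &FileQueue{dirty: map[string]bool{}, processing: map[string]bool{}}
}

// Add enqueues path unless it is already waiting. If path is currently
// being processed it is only marked dirty; Done re-queues it later.
func (q *FileQueue) Add(path string) {
	q.mu.Lock()
	defer q.mu.Unlock()
	if q.dirty[path] {
		return
	}
	q.dirty[path] = true
	if q.processing[path] {
		return
	}
	q.queue = append(q.queue, path)
}

// Get pops the next path, or returns false if nothing is waiting.
func (q *FileQueue) Get() (string, bool) {
	q.mu.Lock()
	defer q.mu.Unlock()
	if len(q.queue) == 0 {
		return "", false
	}
	path := q.queue[0]
	q.queue = q.queue[1:]
	q.processing[path] = true
	delete(q.dirty, path)
	return path, true
}

// Done marks path as finished and re-queues it if it changed meanwhile.
func (q *FileQueue) Done(path string) {
	q.mu.Lock()
	defer q.mu.Unlock()
	delete(q.processing, path)
	if q.dirty[path] {
		q.queue = append(q.queue, path)
	}
}

// Len reports how many items are waiting to be handed out.
func (q *FileQueue) Len() int {
	q.mu.Lock()
	defer q.mu.Unlock()
	return len(q.queue)
}
```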

The end of a given trace is marked by specific known log entries e.g.

“returning response”

so we can trigger accumulating a trace when those entries are seen.

The advantage of this approach is we can avoid needing to create another durable queue to trigger

trace processing because we can just rely on the watermark for the underlying log entry. In effect,

those watermarks will track the completion of updating the traces associated with any log entries up to

the watermark.

We could also use these trace-ending messages to trigger garbage collection of the raw log entries in our KV store.
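As a rough illustration of triggering on the end-of-trace entry, the sketch below buffers entries per trace and hands back the whole trace once the marker is seen, dropping the raw entries at the same time. `Entry` and `Accumulator` are hypothetical names, and an in-memory map stands in for the KV store.

```go
package main

// traceEndMessage is the example marker from the text; real traces may
// end with other known messages.
const traceEndMessage = "returning response"

// Entry is a hypothetical, simplified log entry.
type Entry struct {
	TraceID string
	Message string
}

// Accumulator buffers raw entries per trace ID.
type Accumulator struct {
	pending map[string][]Entry
}

func NewAccumulator() *Accumulator {
	return &Accumulator{pending: make(map[string][]Entry)}
}

// Add stores the entry. When the entry marks the end of its trace, Add
// returns every entry seen for that trace and garbage collects them.
func (a *Accumulator) Add(e Entry) ([]Entry, bool) {
	a.pending[e.TraceID] = append(a.pending[e.TraceID], e)
	if e.Message != traceEndMessage {
		return nil, false
	}
	done := a.pending[e.TraceID]
	delete(a.pending, e.TraceID)
	return done, true
}
```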

Implementation Details

Responsibility for opening up the pebble databases should move into our application class

- This will allow db references to be passed to any classes that need them

We should define a new DB to store the raw log entries

- We should define a new proto to represent the values

Analyzer should be changed to continuously process the log files

- Create a FIFO for log file events

- Persist watermarks to disk

- Register a fsnotifier for the directories containing logs

BlockLogs

When we perform a reduce operation on the log entries for a trace we can emit an event for any block logs that need to be

updated. We can enqueue these in a durable queue using

nsqio/go-diskqueue.

We need to accumulate (block -> traceIds[]string). We need to avoid multiple writers trying to update

the same block concurrently because that would lead to a last one wins situation.

One option would be to have a single writer for the blocks database. Then we could just use a queue

for different block updates. Downside here is we would be limited to a single thread processing all block updates.

An improved version of the above would be to have multiple writers but ensure a given block can only be processed

by one worker at a time. We can use something like workqueue

for this. Workqueue alone won’t be sufficient because it doesn’t let you attach a payload to the enqueued item. The

enqueued item is used as the key. Therefore we’d need a separate mechanism to keep track of all the updates that

need to be applied to the block.

An obvious place to accumulate updates to each block would be in the blocks database itself. Of course that brings

us back to the problem of ensuring only a single writer to a given block at a time. We’d like to make it easy

for code to supply a function that will apply an update to a record in the database.

func ReadModifyWrite[T proto.Message](db *pebble.DB, key string, msg T, modify func(T) error) error {

...

}

To make this thread safe we need to ensure that we never try to update the same block concurrently. We can do

that by implementing row level locks. fishy/rowlock is

an example. It is basically a locked map of row keys to locks. Unfortunately, it doesn’t implement any type of

forgetting so we’d keep accumulating keys. I think we can use the builtin sync.Map

to implement RowLocking with optimistic concurrency. The semantics would be like the following

- Add a ResourceVersion

to the proto that can be used for optimistic locking

- Read the row from the database

- Set ResourceVersion if not already set

- Call LoadOrStore to load or store the resource version in the sync.Map

- If a different value is already stored then restart the operation

- Apply the update

- Generate a new resource version

- Call CompareAndSwap to update the resource version in the sync.Map

- If the value has changed then restart the operation

- Write the updated row to the database

- Call CompareAndDelete to remove the resource version from the sync.Map
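The sketch below is a simplified variant of these steps, not the exact protocol: instead of comparing resource versions, each writer claims the row key in a `sync.Map` with `LoadOrStore` before reading the row and releases it with `CompareAndDelete`. Claiming before the read means a writer never works from a stale copy, and deleting on release keeps the map from accumulating keys. `RowLocks`, `Block` and `BlockStore` are hypothetical names, and an in-memory map stands in for the blocks database.

```go
package main

import (
	"runtime"
	"sync"
	"sync/atomic"
)

// RowLocks claims row keys in a sync.Map.
type RowLocks struct {
	claims sync.Map      // row key -> uint64 token of the current holder
	next   atomic.Uint64 // source of unique tokens
}

// acquire spins until this caller's token is stored for key.
func (l *RowLocks) acquire(key string) uint64 {
	token := l.next.Add(1)
	for {
		if _, taken := l.claims.LoadOrStore(key, token); !taken {
			return token
		}
		runtime.Gosched() // another writer holds the row; retry
	}
}

// release removes the claim, but only if it is still ours.
func (l *RowLocks) release(key string, token uint64) {
	l.claims.CompareAndDelete(key, token)
}

// Block is a hypothetical stand-in for the block proto.
type Block struct {
	TraceIDs []string
}

// BlockStore is an in-memory stand-in for the blocks database.
type BlockStore struct {
	mu    sync.Mutex // guards rows (the Go map itself is not thread safe)
	rows  map[string]Block
	locks RowLocks
}

func NewBlockStore() *BlockStore {
	return &BlockStore{rows: make(map[string]Block)}
}

// ReadModifyWrite applies modify to the row while holding its claim.
func (s *BlockStore) ReadModifyWrite(key string, modify func(*Block) error) error {
	token := s.locks.acquire(key)
	defer s.locks.release(key, token)

	s.mu.Lock()
	row := s.rows[key]
	s.mu.Unlock()

	if err := modify(&row); err != nil {
		return err
	}

	s.mu.Lock()
	s.rows[key] = row
	s.mu.Unlock()
	return nil
}

// Get returns the current value of the row.
func (s *BlockStore) Get(key string) Block {
	s.mu.Lock()
	defer s.mu.Unlock()
	return s.rows[key]
}
```

Writers for different blocks never wait on each other; writers for the same block are serialized by the claim.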

Continuous Learning

When it comes to continuous learning we have two potential options

- We could compute any examples for learning as part of processing a blocklog

- We could have a separate queue for learning and add events as a result of processing a blocklog

I think it makes sense to keep learning as a separate step. The learning process will likely evolve over time and

it’s advantageous if we can redo learning without having to reprocess the logs.

The details will be discussed in a subsequent tech note.

6 - TN006 Continuous Learning

Continuously learn from the traces and block logs

Objective

- Continuously learn from the traces and block logs

TL;DR

TN005 Continuous Log Processing

described how we can continuously process logs. This technote describes how we can continuously learn from the logs.

Background

How Learning Works

TN002 Learning provided the initial design for learning from human feedback.

This was implemented in Learner.Reconcile

as two reconcilers

reconcile.reconcileExamples - Iterates over the blocks database and for each block produces a training example if one is

required

reconcile.reconcileEmbeddings - Iterates over the examples and produces embeddings for each example

Each block currently produces at most 1 training example and the block id and the example ID are the same.

InMemoryDB

implements RAG using an in memory database.

Proposal

We can change the signature of Learner.Reconcile to be

func (l *Learner) Reconcile(ctx context.Context, id string) error

where id is a unique identifier for the block. We can also update Learner to use a workqueue to process blocks

asynchronously.

func (l *Learner) Start(ctx context.Context, events <-chan string, updates chan<-string) error

The learner will listen for events on the event channel and when it receives an event it will enqueue the example

for reconciliation. The updates channel will be used to notify InMemoryDB that it needs to load/reload an example.

For actual implementation we can simplify Learning.reconcileExamples and Learning.reconcileEmbeddings

since we can eliminate the need to iterate over the entire database.

Connecting to the Analyzer

The analyzer needs to emit events when there is a block to be added. We can change the signature of the run function

to be

func (a *Analyzer) Run(ctx context.Context, notifier func(string) error) error

The notifier function will be called whenever there is a block to be added. Using a function means the Analyzer

doesn’t have to worry about the underlying implementation (e.g. channel, workqueue, etc.).
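For example, a channel-backed notifier could look like the sketch below. `ChannelNotifier`, `ErrQueueFull` and `notifyAll` are hypothetical names; the non-blocking send is one possible design choice so that a slow learner cannot stall log processing.

```go
package main

import "errors"

// ErrQueueFull is returned when the consumer is not keeping up.
var ErrQueueFull = errors.New("block event queue is full")

// ChannelNotifier returns a notifier that forwards block IDs to ch
// without blocking the caller.
func ChannelNotifier(ch chan<- string) func(string) error {
	return func(blockID string) error {
		select {
		case ch <- blockID:
			return nil
		default:
			return ErrQueueFull
		}
	}
}

// notifyAll stands in for the point in the Analyzer where updated blocks
// are announced; it only knows about the notifier function.
func notifyAll(blockIDs []string, notifier func(string) error) error {
	for _, id := range blockIDs {
		if err := notifier(id); err != nil {
			return err
		}
	}
	return nil
}
```

Swapping the channel for a workqueue would only require a different notifier; the Analyzer side stays the same.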

Backfilling

We can support backfilling by adding a Backfill method to Learner which will iterate over the blocks database.

This is useful if we need to reprocess some blocks because of a bug or because of an error.

func (l *Learner) Backfill(ctx context.Context, startTime time.Time) error

We can use startTime to filter down the blocks that get reprocessed. Only those blocks that were modified

after start time will get reprocessed.

InMemoryDB

We’d like to update InMemoryDB whenever an example is added or updated. We can use the updates channel

to notify InMemoryDB that it needs to load/reload an example.

Internally InMemoryDB uses a matrix and an array to store the data

- embeddings stores the embeddings for the examples in a num_examples x num_features matrix.

- embeddings[i, :] is the embedding for examples[i]

We’ll need to add a map from example ID to the row in the embeddings matrix map[string]int. We’ll also

need to protect the data with a mutex to make it thread safe.
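A minimal sketch of that bookkeeping, assuming a slice of rows in place of the real matrix type and example IDs in place of full examples. The method names `Upsert` and `Embedding` are hypothetical.

```go
package main

import "sync"

// InMemoryDB keeps embeddings[i] as the embedding for examples[i];
// rows maps an example ID to its index i.
type InMemoryDB struct {
	mu         sync.RWMutex
	examples   []string    // example IDs
	embeddings [][]float32 // num_examples x num_features
	rows       map[string]int
}

func NewInMemoryDB() *InMemoryDB {
	return &InMemoryDB{rows: make(map[string]int)}
}

// Upsert adds a new example or replaces the embedding of an existing one
// in place, so row indices stay stable.
func (db *InMemoryDB) Upsert(id string, embedding []float32) {
	db.mu.Lock()
	defer db.mu.Unlock()
	row := append([]float32(nil), embedding...) // copy to avoid aliasing
	if i, ok := db.rows[id]; ok {
		db.embeddings[i] = row
		return
	}
	db.rows[id] = len(db.examples)
	db.examples = append(db.examples, id)
	db.embeddings = append(db.embeddings, row)
}

// Embedding returns a copy of the embedding for id, if present.
func (db *InMemoryDB) Embedding(id string) ([]float32, bool) {
	db.mu.RLock()
	defer db.mu.RUnlock()
	i, ok := db.rows[id]
	if !ok {
		return nil, false
	}
	return append([]float32(nil), db.embeddings[i]...), true
}

// Len reports the number of stored examples.
func (db *InMemoryDB) Len() int {
	db.mu.RLock()
	defer db.mu.RUnlock()
	return len(db.examples)
}
```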

7 - TN007 Shared Learning

A solution for sharing learning between multiple users

Objective

- Design a solution for sharing learning between multiple users

TL;DR

The examples used for in context learning are currently stored locally. This makes it awkward to share a trained

AI between team members. Users would have to manually swap the examples in order to benefit from each other’s learnings.

There are a couple key design decisions

- Do we centralize traces and block log events or only the learned examples?

- Do we support loading examples from a single location or multiple locations?

To simplify management I think we should do the following

- Only move the learned examples to a central location

- Trace/block logs should still be stored locally for each user

- Add support for loading/saving shared examples to multiple locations

Traces and block log events are currently stored in Pebble. Pebble doesn’t have a good story for using shared

storage cockroachdb/pebble#3177.

We also don’t have an immediate need to move the traces and block logs to a central location.

Treating shared storage location as a backup location means Foyle can still operate fine if the shared storage location

is inaccessible.

Proposal

Users should be able to specify

- Multiple additional locations to load examples from

- A backup location to save learned examples to

LearnerConfig Changes

We can update LearnerConfig to support these changes

type LearnerConfig struct {

// SharedExamples is a list of locations to load examples from

SharedExamples []string `json:"sharedExamples,omitempty"`

// BackupExamples is the location to save learned examples to

BackupExamples string `json:"backupExamples,omitempty"`

}

Loading SharedExamples

To support different storage systems (e.g. S3, GCS, local file system) we can define an interface for working

with shared examples. We currently have the FileHelper

interface

type FileHelper interface {

Exists(path string) (bool, error)

NewReader(path string) (io.Reader, error)

NewWriter(path string) (io.Writer, error)

}

Our current implementation of inMemoryDB requires a Glob function

to find all the examples that should be loaded. We should add a new interface that includes Glob.

type Globber interface {

Glob(pattern string) ([]string, error)

}

For object storage we can implement Glob by listing all the objects matching a prefix and then applying the glob;

similar to this code for matching a regex
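A sketch of that idea, with a plain slice of object names standing in for a bucket listing. `GlobObjects` and `literalPrefix` are hypothetical helpers; a real implementation would pass the literal prefix to the storage API's list call instead of filtering locally. It uses `path.Match`, so `*` does not cross `/` boundaries.

```go
package main

import (
	"path"
	"strings"
)

// literalPrefix returns the part of pattern before the first glob
// metacharacter; this is what would be sent as the list prefix.
func literalPrefix(pattern string) string {
	if i := strings.IndexAny(pattern, `*?[\`); i >= 0 {
		return pattern[:i]
	}
	return pattern
}

// GlobObjects returns the object names that match pattern.
func GlobObjects(names []string, pattern string) ([]string, error) {
	prefix := literalPrefix(pattern)
	var matches []string
	for _, name := range names {
		if !strings.HasPrefix(name, prefix) {
			continue // a prefix listing would never have returned this name
		}
		ok, err := path.Match(pattern, name)
		if err != nil {
			return nil, err
		}
		if ok {
			matches = append(matches, name)
		}
	}
	return matches, nil
}
```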

Triggering Loading of SharedExamples

For an initial implementation we can load shared examples when Foyle starts and perhaps periodically poll for

new examples. I don’t think there’s any need to implement push based notifications for new examples.

Alternatives

Centralize Traces and Block Logs

Since Pebble doesn’t have a good story for using shared

storage cockroachdb/pebble#3177 there’s

no simple solution for moving the traces and block logs to a central location.

The main thing we lose by not centralizing the traces and block logs is the ability to do bulk analysis of traces and

block events across all users. Since we don’t have an immediate use case for that there’s no reason to support it.

References

DuckDB S3 Support

8 - TN008 Auto Insert Cells

Automatically Inserting Suggested Cells

Objective

- Design to have Foyle automatically and continuously generate suggested cells to insert after the current cell

as the user edits a cell in a notebook.

TL;DR

Today Foyle generates a completion when the user explicitly asks for a suggestion by invoking the generate completion

command. The completion results in new cells which are then inserted after the current cell.

We’d like to extend Foyle to automatically and continuously generate suggestions as the user edits a cell in a notebook.

As a user edits the current cell, Foyle will generate one or more suggested cells to insert after the current cell.

These cells will be rendered in a manner that makes it clear they are suggestions that haven’t been accepted or rejected

yet. When the user finishes editing the current cell the suggested cells will be accepted or rejected.

This feature is very similar to how GitHub Copilot AutoComplete works. The key difference is that we won’t try to

autocomplete the current cell.

Not requiring users to explicitly ask for a suggestion should improve the Foyle UX. Right now users have to enter

the intent and then wait while Foyle generates a completion. This interrupts the flow state and requires users to

explicitly think about asking for help. By auto-inserting suggestions we can mask that latency by generating suggestions

as soon as a user starts typing and updating those suggestions as they expand the intent. This should also

create a feedback loop which nudges users to improve the expressiveness of their intents to better steer Foyle.

Since Foyle learns by collecting feedback on completions, auto-generating suggestions increases the opportunity for

learning and should allow Foyle to get smarter faster.

The UX should also be a big step towards assisting with intents that require multiple steps to achieve including

ones where later steps are conditional on the output of earlier steps. One way to achieve multi-step workflows is to

recursively predict the next action given the original intent and all previous actions and their output.

By auto-inserting cells we create a seamless UX for recursively generating next action predictions; all a user

has to do is accept the suggestion and then execute the code cells; as soon as they do the next action will be automatically

generated.

Motivation: Examples of Multi-Step Workflows

Here are some examples of multi-step workflows.

GitOps Workflows

Suppose we are using GitOps and Flux and want to determine whether the Foo service is up to

date. We can do this as follows. First we can use git to get latest commit of the repository

git ls-remote https://github.com/acme/foo.git

Next we can get the flux kustomization for the Foo service

to see what git commit has been applied

kubectl get kustomization foo -o jsonpath='{.status.lastAppliedRevision}'

Finally we can compare the two commits to see if the service is up to date.

Notably, in this example neither of the commands directly depends on the output of another command. However, this

won’t always be the case. For example, if we wanted to check whether a particular K8s deployment was up to date we

might do the following

- Use kubectl get deploy to get the flux annotation kustomize.toolkit.fluxcd.io/name to identify the kustomization

- Use kubectl get kustomization to get the last applied revision of the kustomization and to identify the source controller

- Use kubectl get gitrepository to get the source repository and its latest commit

In this case the command at each step depends on the output of the previous step.

Troubleshooting Workload Identity and GCP Permissions

Troubleshooting workflows are often multi-step workflows. For example, suppose we are trying to troubleshoot why a

pod on a GKE cluster can’t access a GCS bucket. We might do the following

- Use kubectl to get the deployment to identify the Kubernetes service account (KSA)

- Use kubectl to get the KSA to identify and get the annotation specifying the GCP service account (GSA)

- Use gcloud policy-troubleshoot iam to check whether the GSA has the necessary permissions to access the GCS bucket

Motivation: Verbose Responses and Chat Models

One of the problems with the existing UX is that since we use Chat models to generate completions the completions

often contain markdown cells before or after the code cells. These cells correspond to the chat model providing additional

exposition or context. For example if we use a cell with the text

Use gcloud to list the buckets

Foyle inserts two cells

A markdown cell

To list the buckets using `gcloud`, you can use the following command:

and then a code cell

gcloud storage buckets list

The markdown cell is redundant with the previous markdown cell and a user would typically delete it. We’d like

to create a better UX where the user can more easily accept the suggested code cell and reject the markdown cell.

UX For Continuous Suggestion

As the user edits one cell (either markdown or code), we’d like Foyle to be continuously running in the background to

generate one or more cells to be inserted after the current cell. This is similar to GitHub Copilot and

[Continue.Dev Autocomplete](https://docs.continue.dev/walkthroughs/tab-autocomplete). An important difference

is that we don’t need Foyle to autocomplete the current cell. This should simplify the problem because

- we have more time to generate completions

- the completion needs to be ready by the time the user is ready to move onto the next cell rather than before

they type the next character

- we can use larger models and longer context windows since we have a larger latency budget

- we don’t need to check that the completion is still valid after each character is typed into the current cell because we aren’t

trying to autocomplete the word(s) in the current cell.

If we mimicked ghost text, the experience might look as follows

- User is editing cell N in the notebook

- Foyle is continuously running in the background to generate suggested cells N+1,…, N+K

- The suggested cells N+1,…,N+K are rendered in the notebook in a manner that makes it clear that they are suggestions

that haven’t been accepted or rejected yet

- Following Ghost Text we could use a grayed out font and different border for the cells

- As the user edits cell N, Foyle updates and edits N+1,…,N+K to reflect changes to its suggestions

- The decision to accept or reject the suggestion is triggered when the user decides to move onto cell N+1

- If the user switches focus to the suggested N+1 cell that’s considered accepting the suggestion

- If the user inserts a new cell that’s considered a rejection

- Each time Foyle generates a new completion it replaces any previous suggestions that haven’t been accepted and inserts

the new suggested cells

- When the notebook is persisted unapproved suggestions are pruned and not saved

Since every notebook cell is just a TextEditor I think we can use the

TextEditorDecoration API

to change how text is rendered in the suggested cells to indicate they are unaccepted suggestions.

We can use Notebook Cell Metadata

to keep track of cells that are associated with a particular suggestion and need to be accepted or rejected. Since

metadata is just a key value store we can add a boolean “unaccepted” to indicate a cell is still waiting on acceptance.

Completion Protocol

Our existing GenerateService

is a unary RPC call. This isn’t ideal for continually generating suggestions as a user updates a block.

We can use the connect protocol to define a full streaming RPC

call for generating suggestions. This will allow the client to stream updates to the doc to the generate

service and the generate service to respond with a stream of completions.

// Generate completions using AI

service GenerateService {

// StreamGenerate is a bidirectional streaming RPC for generating completions

rpc StreamGenerate (stream StreamGenerateRequest) returns (stream StreamGenerateResponse) {}

}

message StreamGenerateRequest {

oneof request {

FullContext full_context = 1;

BlockUpdate update = 2;

}

}

message FullContext {

Doc doc = 1;

int32 selected = 2;

}

message BlockUpdate {

string block_id = 1;

string block_content = 2;

}

message Finish {

// Indicates whether the completion was accepted or rejected.

bool accepted = 1;

}

message StreamGenerateResponse {

repeated Block blocks = 1;

}

The stream will be initiated by the client sending a FullContext message with the full document and the index

of the current cell that is being edited. Subsequent messages will be BlockUpdates containing the full content

of the current active cell. If it’s a code cell it will include the outputs if the cell has been executed.

Each StreamGenerateResponse will contain a complete copy of the latest suggestion. This is simpler than

only sending and applying deltas relative to the previous response.
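On the server side, the message ordering described above amounts to a small state machine per stream. The sketch below uses plain Go structs as simplified, hypothetical stand-ins for the generated proto types (a struct with two optional pointers mimics the oneof); `session` and `apply` are illustrative names only.

```go
package main

import "errors"

// Simplified stand-ins for the proto messages above.
type Block struct {
	ID      string
	Content string
}

type FullContext struct {
	Blocks   []Block
	Selected int
}

type BlockUpdate struct {
	BlockID      string
	BlockContent string
}

// StreamGenerateRequest holds exactly one of FullContext or Update.
type StreamGenerateRequest struct {
	FullContext *FullContext
	Update      *BlockUpdate
}

// session tracks the server's copy of the document for one stream.
type session struct {
	blocks   []Block
	selected int
	started  bool
}

// apply folds one request into the session state. The first message must
// be a FullContext; later BlockUpdates replace a block's full content.
func (s *session) apply(req StreamGenerateRequest) error {
	switch {
	case req.FullContext != nil:
		fc := req.FullContext
		if fc.Selected < 0 || fc.Selected >= len(fc.Blocks) {
			return errors.New("selected index out of range")
		}
		s.blocks = append([]Block(nil), fc.Blocks...)
		s.selected = fc.Selected
		s.started = true
		return nil
	case req.Update != nil:
		if !s.started {
			return errors.New("BlockUpdate received before FullContext")
		}
		for i := range s.blocks {
			if s.blocks[i].ID == req.Update.BlockID {
				s.blocks[i].Content = req.Update.BlockContent
				return nil
			}
		}
		return errors.New("unknown block " + req.Update.BlockID)
	default:
		return errors.New("empty request")
	}
}
```

After each successful `apply` the server would regenerate and send a complete `StreamGenerateResponse`.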

Client Changes

In vscode we can use the

onDidChangeTextDocument

event to listen for changes to a notebook cell (for more detail see appendix). We can handle

this event by initializing a streaming connection to the backend to generate suggestions. On subsequent changes

to the cell we can stream the updated cell contents to the backend to get updated suggestions.

We could use a rate limiting queue (i.e. similar to workqueue

but implemented in TypeScript) to ensure we don’t send too many requests to the backend. Each time the cell changes

we can enqueue an event and update the current contents of the cell. We can then process the events with rate limiting

enforced. Each time we process an event we’d send the most recent version of the cell contents.
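The coalescing part of this idea is sketched below in Go, to stay consistent with the other examples in these notes; the extension itself would implement the equivalent in TypeScript. `LatestCells` and `CellUpdate` are hypothetical names. `Update` overwrites the pending contents for a cell, and `Drain` is meant to be called from whatever rate limited loop sends requests, so each send carries only the newest contents.

```go
package main

import "sync"

// CellUpdate is the newest known contents of one cell.
type CellUpdate struct {
	CellID   string
	Contents string
}

// LatestCells remembers only the most recent contents per changed cell.
type LatestCells struct {
	mu      sync.Mutex
	pending map[string]string // cell ID -> newest contents
	order   []string          // cell IDs in first-change order
}

func NewLatestCells() *LatestCells {
	return &LatestCells{pending: make(map[string]string)}
}

// Update records a change; repeated changes to a cell overwrite each other.
func (l *LatestCells) Update(cellID, contents string) {
	l.mu.Lock()
	defer l.mu.Unlock()
	if _, queued := l.pending[cellID]; !queued {
		l.order = append(l.order, cellID)
	}
	l.pending[cellID] = contents
}

// Drain returns one update per changed cell and clears the pending set.
func (l *LatestCells) Drain() []CellUpdate {
	l.mu.Lock()
	defer l.mu.Unlock()
	out := make([]CellUpdate, 0, len(l.order))
	for _, id := range l.order {
		out = append(out, CellUpdate{CellID: id, Contents: l.pending[id]})
	}
	l.pending = make(map[string]string)
	l.order = nil
	return out
}
```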

Accepting or Rejecting Suggestions

We need a handler to accept or reject the suggestion. If the suggestion is accepted it would

- Update the metadata on the cells to indicate they are accepted

- Change the font rendering to indicate the cells are no longer suggestions

This could work as follows

- A suggested cell is accepted when the user moves the focus to the suggested cell

- e.g. by using the arrow keys or mouse

- If we find this a noisy signal we could consider requiring additional signals such as a user executes the code cell

or for a markdown cell edits or renders it

- When the document is persisted any unaccepted suggestions would be pruned and not get persisted

- Any time a new completion is generated; any unaccepted cells would be deleted and replaced by the new suggested

cells

Since each cell is a text editor, we can use

onDidChangeActiveTextEditor

to detect when the focus changes to a new cell and check if that cell is a suggestion and accept it if it is.

To prune the suggestions when the document is persisted we can update RunMe’s serializer to filter out any unapproved

cells.
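The filtering itself is simple. The sketch below assumes string-valued cell metadata and an `unaccepted` key set to `"true"` on suggested cells; `Cell`, `pruneSuggestions` and `accept` are hypothetical names rather than RunMe's actual serializer types.

```go
package main

// Cell is a hypothetical, minimal notebook cell with key/value metadata.
type Cell struct {
	Value    string
	Metadata map[string]string
}

// unacceptedKey is an assumed metadata key marking a pending suggestion.
const unacceptedKey = "unaccepted"

// isSuggestion reports whether the cell is still waiting on acceptance.
func isSuggestion(c Cell) bool {
	return c.Metadata[unacceptedKey] == "true"
}

// pruneSuggestions returns the cells that should be persisted: everything
// except suggestions that have not been accepted.
func pruneSuggestions(cells []Cell) []Cell {
	kept := make([]Cell, 0, len(cells))
	for _, c := range cells {
		if !isSuggestion(c) {
			kept = append(kept, c)
		}
	}
	return kept
}

// accept clears the flag so the cell survives the next save.
func accept(c *Cell) {
	delete(c.Metadata, unacceptedKey)
}
```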

As noted in the motivation section, we’d like to create a better UX for rejecting cells containing verbose markdown

responses. With the proposed UX if a user skips over a suggested cell we could use that to reject earlier cells.

For example, suppose cell N+1 contains verbose markdown the user doesn’t want and N+2 contains useful commands.

In this case a user might use the arrow keys to quickly switch the focus to N+2. In this case, since the user

didn’t render or execute N+1 we could interpret that as a rejection of N+1 and remove that cell.

The proposal also means the user would almost always see some suggested cells after the current active cell. Ideally, to avoid

spamming the user with low quality suggestions Foyle would filter out low confidence suggestions and just return

an empty completion. This would trigger the frontend to remove any current suggestions and show nothing.

Ghost Cells In VSCode

We’d like to render cells in VSCode so as to make it apparent they are suggestions. An obvious way to represent

a Ghost Cell would be to render its contents using GhostText; i.e. greyed out text.

In VScode the NotebookDocument and NotebookCell correspond to the in memory data representation of the notebook.

Importantly, these APIs don’t represent the visual representation of the notebook. Each NotebookCell contains

a TextDocument representing the actual contents of the cell.

A TextEditor is the visual representation of a cell. Each TextEditor has a TextDocument. TextEditor.setDecorations

can be used to change how text is rendered in a TextEditor. We can use setDecorations to change how the contents

of a TextEditor are rendered.

TextEditors aren’t guaranteed to exist for all cells in a notebook. A TextEditor is created when a cell becomes visible

and can be destroyed when the cell is no longer visible.

So we can render Ghost cells as follows

- Use metadata in NotebookCell to indicate that a cell is a suggestion and should be rendered as a GhostCell

- This will persist even if the cell isn’t visible

- Use the onDidChangeVisibleTextEditors to respond whenever a TextEditor is created or becomes visible

- From the TextEditor passed to the onDidChangeVisibleTextEditors handler get the URI of the TextDocument

- This URI should uniquely identify the cell

- Use the URI to find the corresponding NotebookCell

- Determine if the cell is a GhostCell by looking at the metadata of the cell

- If the cell is a GhostCell use vscode.window.createTextEditorDecorationType to render the cell as a GhostCell

LLM Completions

The backend will generate an LLM completion in response to each StreamGenerateRequest and then return the response

in a StreamGenerateResponse. This is the simplest thing we can do but could be wasteful as we could end up

recomputing the completion even if the cell hasn’t changed sufficiently to alter the completion. In the future,

we could explore more sophisticated strategies for deciding when to compute a new completion. One simple thing

we could do is

- Tokenize the cell contents into words

- Assign each word a score measuring its information content, -log(p(w)), where p(w) is the probability of the word in the training data.

- Generate a new completion each time a word with a score above a threshold is added or removed from the cell.
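A rough sketch of that heuristic follows. The word probabilities, the `floor` probability used for unseen words, and the threshold are all assumptions that would need tuning; `score` and `shouldRegenerate` are hypothetical names.

```go
package main

import (
	"math"
	"strings"
)

// score returns -log(p(w)). Words missing from prob are treated as rare
// and given the floor probability.
func score(word string, prob map[string]float64, floor float64) float64 {
	p, ok := prob[word]
	if !ok || p <= 0 {
		p = floor
	}
	return -math.Log(p)
}

// wordCounts tokenizes text on whitespace and counts lowercase words.
func wordCounts(text string) map[string]int {
	counts := make(map[string]int)
	for _, w := range strings.Fields(strings.ToLower(text)) {
		counts[w]++
	}
	return counts
}

// shouldRegenerate reports whether any word whose score exceeds threshold
// was added to or removed from the cell between oldText and newText.
func shouldRegenerate(oldText, newText string, prob map[string]float64, floor, threshold float64) bool {
	oldCounts, newCounts := wordCounts(oldText), wordCounts(newText)
	for w, n := range newCounts {
		if oldCounts[w] != n && score(w, prob, floor) > threshold {
			return true
		}
	}
	for w := range oldCounts {
		if _, still := newCounts[w]; !still && score(w, prob, floor) > threshold {
			return true
		}
	}
	return false
}
```

With a threshold of 5, adding a common word such as "the" (p = 0.05, score about 3.0) would not trigger a new completion, while adding "buckets" (p = 0.0001, score about 9.2) would.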

Multiple completions

Another future direction we could explore is generating multiple completions and then trying to rank them and select

the best one. Alternatively, we could explore a UX that would make it easy to show multiple completions and let the

user select the best one.

Collecting Feedback And Learning

As described in TN002 Learning and in the blog post

Learning, Foyle relies on implicit human feedback to learn from its mistakes.

Currently, Foyle only collects feedback when a user asks for a suggestion. By automatically generating suggestions

we create more opportunities for learning and should hopefully increase Foyle’s learning rate.

We’d like to log when users accept or reject suggestions. We can introduce a new protocol to log events

service LoggingService {

rpc Log (LogRequest) returns (LogResponse) {}

}

message LogRequest{

repeated CellEvent cell_events = 1;

}

enum EventType {

UNKNOWN = 0;

ACCEPTED = 1;

REJECTED = 2;

EXECUTED = 3;

}

message CellEvent {

Cell cell = 1;

EventType event_type = 2;

}

message LogResponse {}

When a cell is accepted or rejected we can use the Log RPC to log it to Foyle.

One of the strongest signals we can collect is when the user rejects the completion. In this case, we’d like to log

and learn from whatever code the user ends up manually entering and executing. Right now Foyle relies on

RunMe’s Runner Service

to log cell executions. This method only contains the executed cell and not any preceding cells. We need those

preceding cells in order to learn.

Using the proposed logging service we can directly log when a cell is executed and include preceding cells as

context so we can retrain Foyle to better predict the code cell in the future.

Alternative Designs

Complete the current cell

The current proposal calls for “Auto-Inserting” one or more cells but not autocompleting the current cell. An

alternative would be to do both simultaneously

- Autocomplete the current cell

- Auto-Insert one or more cells after the current cell

One of the reasons for not autocompleting the current cell is because GitHub Copilot already does that. Below

is a screenshot illustrating GitHub Copilot populating the current cell with Ghost Text containing a possible completion.

More importantly, the problem we want Foyle to solve is turning high level expressions of intent into concrete low

level actions. In this context a user edits a markdown cell to express their intent. The actions are rendered

as code cells that would come next. So by focusing on generating the next code cells we are scoping the problem

to focus on generating the actions to accomplish the intent rather than helping users express their intent.

By focusing on generating the next cell rather than completing the current cell we can tolerate higher latencies

for completion generation than in a traditional autocomplete system. In a traditional autocomplete system you

potentially want to update the completion after each character is typed leading to very tight latency requirements.

In the proposal our latency bounds are determined by how long it takes the user to complete the current cell and

move onto the next cell. We can take advantage of this to use larger models and longer context windows which should

lead to better suggestions.

References

VSCode

How does LLM triggering work in Autocomplete

Typically in autocomplete you are completing the stream of characters a user is typing. So as each character

is added it could invalidate one or more completions. For example if the user enters the character “n” possible

completions are “news…”, “normal…”, etc… When the user enters the next character “ne” we’d like to immediately

update the ranking of completions and reject any that are no longer valid.

One way to handle this is each time you generate a completion you could ask the LLM to generate multiple completions

(e.g. [OpenAI’s n parameter](https://platform.openai.com/docs/api-reference/chat/create)). These completions

should represent the most likely completions given the current input. Each additional character can then be used

to invalidate any suggestions that don’t match.
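In its simplest form the invalidation step is a prefix filter over the candidate completions, as in this sketch (`stillValid` is a hypothetical helper; ranking is left out).

```go
package main

import "strings"

// stillValid keeps the completions that are consistent with what the
// user has typed so far.
func stillValid(completions []string, typed string) []string {
	var kept []string
	for _, c := range completions {
		if strings.HasPrefix(c, typed) {
			kept = append(kept, c)
		}
	}
	return kept
}
```

Starting from the candidates "news", "normal" and "next", typing "n" keeps all three, while typing "ne" drops "normal".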

This section provides information about how VSCode extensions and notebooks work. It is relevant for figuring

out how to implement the extension changes.

In VSCode notebooks each cell is just a discrete text editor

(discord thread).

VScode’s extension API is defined here.

The onDidChangeTextDocument

fires when the text in a document changes. This could be used to trigger the AI to generate suggestions.

As described in vscode_apis Notebooks have

a data API (NotebookData, NotebookCellData) and an editor API (NotebookDocument, NotebookCell). NotebookCell contains

a TextDocument which we should be able to use to listen for onDidChangeTextDocument events.

I think you can register a handler that will fire for changes to any TextDocument and not just for a particular

cell. The document URI should use the vscode-notebook-cell scheme and allow us to identify the notebook document

and cell that changed (code example).

TextDecorations

TextDecorations are properties of TextEditors. Each NotebookCell is a different TextEditor. TextEditors get

created for a NotebookCell when the cell becomes visible and can be destroyed when the cell is deleted.

9 - TN009 Review AI in Notebooks

Review AI in Notebooks

Objective

- Review how other products/projects are creating AI enabled experiences in a notebook UX

TL;DR

There are lots of notebook products and notebook like interfaces. This doc tries to summarize how they are using AI to

enhance the notebook experience. The goal is to identify features and patterns that could

be useful incorporating into Foyle and RunMe.

JupyterLab AI

JupyterLab AI is a project that aims to bring AI

capabilities to JupyterLab. It looks like it introduces a couple of ways of interacting with AIs

The chat feature looks very similar to GitHub Copilot Chat. Presumably it’s more aware of the notebook structure.

For example, it lets you select notebook cells and include them in your prompt

(docs).

Chat offers built in RAG. Using the

/learn command you can

inject a bunch of documents into a datastore. These documents are then used to answer questions in the chat using

RAG.

The AI magic commands

let you directly invoke different AI models. The prompt is the text inside the cell. The model output

is then rendered in a new cell. This is useful if you’re asking the model to produce code because you can then execute

the code in the notebook.

In RunMe you can achieve much of the same functionality as the magics by using a CLI like llm

and just executing that in a bash cell.

Compared to what’s proposed in TN008 Autocompleting cells it looks like the inline

completer only autocompletes the current cell; it doesn’t suggest new cells.

JupyterLab AI Links

Hex

Hex magic lets you describe in natural language what you want to do and then it adds the cells to the notebook to do that.

For example, you can describe a graph you want and it will add an SQL cell to select the data

Demo Video.

Notably, from a UX perspective prompting the AI is a “side conversation”. There is a hover window that lets you

ask the AI to do something and then the AI will modify the notebook accordingly. For example, in their

Demo Video you explicitly ask the AI to

“Build a cohort analysis to look at customer churn”. The AI then adds the cells to the notebook.

This is different from an AutoComplete like UX as proposed in TN008 Autocompleting cells.

In an Autocomplete like UX, a user might add a markdown cell containing a heading like “Customer Churn: Cohort Analysis”.

The AI would then autocomplete these by inserting the cells to perform the analysis and render it. The user

wouldn’t have to explicitly prompt the AI.

10 - TN010 Level 1 Evaluation

Level 1 Evaluation

Objective:

Design level 1 evaluation to optimize responses for AutoComplete

TL;DR

As we roll out AutoComplete we are observing

that AI quality is an issue jlewi/foyle#170.

Examples of quality issues we are seeing are

- AI suggests a markdown cell rather than a code cell when a user is editing a markdown cell

- AI splits commands across multiple code cells rather than using a single code cell

We can catch these issues using Level 1 Evals

which are basically assertions applied to the AI responses.

To implement Level 1 Evals we can do the following

- We can generate an evaluation from logs of actual user interactions

- We can create scripts to run the assertions on the AI responses

- We can use RunMe to create playbooks to run evaluations and visualize the results as well as data

Background:

Existing Eval Infrastructure

TN003 described how we could do evaluation given a golden dataset of examples.

The implementation was motivated by a number of factors.

We want a resilient design because batch evaluation is a long running, flaky process that depends on an external

service (i.e. the LLM provider). Since LLM invocations cost money we want to avoid needlessly recomputing

generations if nothing has changed. Therefore, we want to be able to checkpoint progress and resume from where we left off.

We want to run the same codepaths as in production. Importantly, we want to reuse logging and visualization tooling

so we can inspect evaluation results and understand why the AI succeeded or failed.

To run experiments, we need to modify the code and deploy a new instance of Foyle just for evaluation.

We don’t want the evaluation logs to be mixed with the production logs; in particular we don’t want to learn from

evaluation data.

Evaluation was designed around a controller pattern. The controller figures out which computations need to be run

for each data point and then runs them. Thus, once a data point is processed, rerunning evaluation becomes a no-op.

For example, if an LLM generation has already been computed, we don’t need to recompute it. Each data point

was an instance of the EvalResult Proto. A Pebble Database was used to store the results.

Google Sheets was used for reporting of results.
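
The checkpointing behavior of the controller pattern can be sketched as follows. This is a minimal illustration, not the actual Evaluator: the result type is a simplified stand-in for the EvalResult proto, an in-memory map stands in for the Pebble database, and the names store and reconcile are hypothetical.

```go
package main

import "fmt"

// result is a hypothetical, simplified stand-in for the EvalResult proto.
type result struct {
	ID         string
	Generation string // empty until the LLM generation has been computed
}

// store is an in-memory stand-in for the Pebble database of results.
type store map[string]*result

// reconcile runs only the computations that are still missing for each data
// point and returns how many generations it actually computed.
func reconcile(db store, ids []string, generate func(id string) (string, error)) (int, error) {
	computed := 0
	for _, id := range ids {
		r, ok := db[id]
		if !ok {
			r = &result{ID: id}
			db[id] = r
		}
		if r.Generation != "" {
			continue // already checkpointed; nothing to do
		}
		out, err := generate(id)
		if err != nil {
			// Progress so far stays in db, so a later run resumes from here.
			return computed, fmt.Errorf("generate %s: %w", id, err)
		}
		r.Generation = out
		computed++
	}
	return computed, nil
}

func main() {
	db := store{}
	gen := func(id string) (string, error) { return "generated for " + id, nil }
	first, _ := reconcile(db, []string{"a", "b"}, gen)
	second, _ := reconcile(db, []string{"a", "b"}, gen)
	fmt.Println(first, second) // prints "2 0"
}
```

The second run computes nothing because every data point already has a generation, which is the property that makes resuming after a failure cheap.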

Experiments were defined using the Experiment Resource via YAML files. An experiment could

be run using the Foyle CLI.

Defining Assertions

As noted in Your AI Product Needs Evals we’d like

to design our assertions so they can be run online and offline.

We can use the following interface to define assertions

type Assertion interface {
	// Assert evaluates the AI response (answer) for the given document and examples.
	Assert(ctx context.Context, doc *v1alpha1.Doc, examples []*v1alpha1.Example, answer []*v1alpha1.Block) (AssertResult, error)
	// Name returns the name of the assertion.
	Name() string
}

// AssertResult is the outcome of running an assertion.
type AssertResult string

const (
	AssertPassed  AssertResult = "passed"
	AssertFailed  AssertResult = "failed"
	AssertSkipped AssertResult = "skipped"
)

The Assert method takes a document, examples, and the AI response and returns a result indicating whether the assertion passed, failed,

or was skipped. Context can be used to pass along the traceId of the actual request.
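
As an illustration, here is a sketch of one assertion written against this interface. To keep the example self-contained, Doc, Example, Block, and BlockKind are simplified local stand-ins for the v1alpha1 protos, and the OneCodeCell type is hypothetical. Returning AssertSkipped for an empty response is an assumed policy, not something the design prescribes.

```go
package main

import (
	"context"
	"fmt"
)

// Hypothetical, simplified stand-ins for the v1alpha1 protos.
type BlockKind int

const (
	Markup BlockKind = iota
	Code
)

type Block struct {
	Kind     BlockKind
	Contents string
}

type Doc struct{ Blocks []*Block }
type Example struct{}

type AssertResult string

const (
	AssertPassed  AssertResult = "passed"
	AssertFailed  AssertResult = "failed"
	AssertSkipped AssertResult = "skipped"
)

type Assertion interface {
	Assert(ctx context.Context, doc *Doc, examples []*Example, answer []*Block) (AssertResult, error)
	Name() string
}

// OneCodeCell checks that the response contains exactly one code cell.
type OneCodeCell struct{}

func (OneCodeCell) Name() string { return "OneCodeCell" }

func (OneCodeCell) Assert(ctx context.Context, doc *Doc, examples []*Example, answer []*Block) (AssertResult, error) {
	if len(answer) == 0 {
		// Nothing was generated, so this assertion does not apply (assumed policy).
		return AssertSkipped, nil
	}
	n := 0
	for _, b := range answer {
		if b.Kind == Code {
			n++
		}
	}
	if n == 1 {
		return AssertPassed, nil
	}
	return AssertFailed, nil
}

func main() {
	var a Assertion = OneCodeCell{}
	// A response that splits commands across two code cells should fail.
	answer := []*Block{{Kind: Code, Contents: "ls"}, {Kind: Code, Contents: "pwd"}}
	res, err := a.Assert(context.Background(), &Doc{}, nil, answer)
	fmt.Println(a.Name(), res, err)
}
```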

Online Evaluation

For online execution, we can run the assertions asynchronously in a thread. We can log the assertions using existing logging patterns. This will allow us to fetch the assertion results as part of the trace. Reporting the results should not be the responsibility of each Assertion; we should handle that centrally. We will use OTEL to report the results as well; each

assertion will be added as an attribute to the trace. This will make it easy to monitor performance over time.
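
A rough sketch of the online flow: assertions run in a background goroutine and every outcome is handed to a single sink, so individual assertions never deal with reporting. All names here (check, report, runAsync, nonEmpty) are hypothetical, and the check type is a reduced form of the Assertion interface. In a real implementation the sink is where the log entry and the OTEL trace attribute would be written; neither is shown.

```go
package main

import (
	"context"
	"fmt"
	"sync"
)

type AssertResult string

const (
	AssertPassed AssertResult = "passed"
	AssertFailed AssertResult = "failed"
)

// check is a hypothetical, reduced form of an Assertion: a name plus a
// function that evaluates the AI response.
type check struct {
	Name string
	Run  func(ctx context.Context, response string) AssertResult
}

// report is one assertion outcome tied to the trace that produced it.
type report struct {
	TraceID   string
	Assertion string
	Result    AssertResult
}

// runAsync evaluates the checks in a background goroutine so the user-facing
// request is not blocked. Every outcome goes to the same sink, which keeps
// reporting in one central place.
func runAsync(ctx context.Context, traceID, response string, checks []check, sink func(report)) *sync.WaitGroup {
	wg := &sync.WaitGroup{}
	wg.Add(1)
	go func() {
		defer wg.Done()
		for _, c := range checks {
			sink(report{TraceID: traceID, Assertion: c.Name, Result: c.Run(ctx, response)})
		}
	}()
	return wg
}

// nonEmpty is a toy check that fails on an empty response.
func nonEmpty(_ context.Context, response string) AssertResult {
	if response == "" {
		return AssertFailed
	}
	return AssertPassed
}

func main() {
	var reports []report
	wg := runAsync(context.Background(), "trace-123", "ls -la",
		[]check{{Name: "NonEmpty", Run: nonEmpty}},
		func(r report) { reports = append(reports, r) })
	wg.Wait() // only needed here so the program does not exit early
	fmt.Println(reports)
}
```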

Batch Evaluation

For quickly iterating on the AI, we need to be able to do offline, batch evaluation. Following

the existing patterns for evaluation, we can define a new AssertJob resource to run the assertions.

kind: AssertJob
apiVersion: foyle.io/v1alpha1
metadata:
  name: "learning"
spec:
  sources:
    - traceServer:
        address: "http://localhost:8080"
    - mdFiles:
        path: "foyle/evalData"
  # Pebble database used to store the results
  dbDir: /Users/jlewi/foyle_experiments/20250530-1612/learning
  agent: "http://localhost:108080"
  sheetID: "1iJbkdUSxEkEX24xMH2NYpxqYcM_7-0koSRuANccDAb8"
  sheetName: "WithRAG"

The sources field specifies the sources for the evaluation dataset. There are two different kinds of sources. A traceServer

specifies an instance of a Foyle Agent that makes its traces available via an API. This can be used to generate examples

based on actual user interactions. The traces need to be read via the API and not directly from the Pebble database because the Pebble database is not designed for concurrent access.

The mdFiles field allows examples to be provided as markdown files. This will be used to create a handcrafted curated

dataset of examples.

The agent field specifies the address of the instance of Foyle to be evaluated. This instance should be configured to store its data in a different location.

The sheetID and sheetName fields specify the Google Sheet where the results will be stored.

To perform the evaluation, we can implement a controller modeled on our existing Evaluator.

Traces Service

We need to introduce a Trace service to allow the evaluation to access traces.

service TracesService {
  rpc ListTraces(ListTracesRequest) returns (ListTracesResponse) {}
}

We’ll need to support filtering and pagination. The most obvious way to filter would be on time range.

A crude way to support time based filtering would be as follows

- Raw log entries are written in timestamp order

- Use the raw logs to read log entries based on time range

- Get the unique set of TraceIDs in that time range

- Look up each trace in the traces database
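
The steps above can be sketched as follows. The logEntry type, the traceIDsInRange function, and the sample data are hypothetical. The sketch assumes the raw log entries are already loaded in timestamp order, and it leaves the final lookup in the traces database out.

```go
package main

import (
	"fmt"
	"sort"
	"time"
)

// logEntry is a hypothetical, minimal view of a raw log entry.
type logEntry struct {
	Time    time.Time
	TraceID string
}

// traceIDsInRange returns the unique trace IDs of entries with
// start <= Time < end, in order of first appearance. It relies on entries
// being sorted by timestamp so the start can be found by binary search.
func traceIDsInRange(entries []logEntry, start, end time.Time) []string {
	first := sort.Search(len(entries), func(i int) bool {
		return !entries[i].Time.Before(start)
	})
	seen := map[string]bool{}
	var ids []string
	for _, e := range entries[first:] {
		if !e.Time.Before(end) {
			break // sorted input: everything after this is out of range
		}
		if e.TraceID != "" && !seen[e.TraceID] {
			seen[e.TraceID] = true
			ids = append(ids, e.TraceID)
		}
	}
	return ids
}

// sampleEntries returns a small, timestamp-ordered log for illustration.
func sampleEntries() []logEntry {
	t0 := time.Date(2024, 7, 1, 12, 0, 0, 0, time.UTC)
	return []logEntry{
		{t0, "a"},
		{t0.Add(1 * time.Minute), "a"},
		{t0.Add(2 * time.Minute), "b"},
		{t0.Add(10 * time.Minute), "c"},
	}
}

func main() {
	entries := sampleEntries()
	start := entries[0].Time.Add(30 * time.Second)
	end := entries[0].Time.Add(5 * time.Minute)
	// Each returned ID would then be looked up in the traces database.
	fmt.Println(traceIDsInRange(entries, start, end)) // prints [a b]
}
```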

Initial Assertions

Here are some initial assertions we can define

- If human is editing a markdown cell, suggestion should start with a code cell

- The response should contain one code cell

- Use regexes to check if interactive metadata is set correctly jlewi/foyle#157

- interactive should be false unless the command matches a regex for an interactive command e.g. “kubectl.exec.”, “docker.run.” etc…

- Ensure the AI doesn’t generate any cells for empty input
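
The interactive metadata check from the list above could look roughly like this. The patterns are illustrative guesses rather than the regexes Foyle uses, and the function names are hypothetical. The sketch only enforces the rule as stated: a cell marked interactive must match one of the patterns.

```go
package main

import (
	"fmt"
	"regexp"
)

// interactivePatterns are illustrative guesses at commands that need a TTY.
var interactivePatterns = []*regexp.Regexp{
	regexp.MustCompile(`\bkubectl\s.*\bexec\b`),
	regexp.MustCompile(`\bdocker\s.*\brun\b`),
}

// isInteractive reports whether the command matches any interactive pattern.
func isInteractive(command string) bool {
	for _, p := range interactivePatterns {
		if p.MatchString(command) {
			return true
		}
	}
	return false
}

// interactiveFlagOK returns false only when a cell is marked interactive
// although its command matches none of the interactive patterns.
func interactiveFlagOK(command string, interactive bool) bool {
	return !interactive || isInteractive(command)
}

func main() {
	fmt.Println(interactiveFlagOK("kubectl exec -it mypod -- bash", true)) // prints true
	fmt.Println(interactiveFlagOK("kubectl get pods", true))               // prints false
}
```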

Reference

Your AI Product Needs Evals: blog post describing the Level 1, 2, and 3 evals.

11 - TN011 Building An Eval Dataset

Building an Eval Dataset

Objective

- Design a solution for autobuilding an evaluation dataset from logs

TL;DR

An evaluation dataset is critical for being able to iterate quickly. Right now

cost is a major concern because Ghost Cells use a lot of tokens due to a naive

implementation. There are a number of experiments we’d like to run to see if

we can reduce cost without significantly impacting quality.

One way to build an evaluation dataset is from logs. With the recent logging

changes, we introduced a concept of sessions. We can use sessions to build an

evaluation dataset.

Sessionization Pipeline

The frontend triggers a new session whenever the active text editor changes.

When the activeEditor changes the frontend sends a LogEvent closing the current

session and starting a new one. The sessionId is included in requests, e.g. StreamGenerate,

using the contextId parameter. Each time a stream is initiated, the initial request

should include the notebook context.

If the active editor is a code cell and it is executed the frontend will send a LogEvent

recording execution.

The streaming log processor can build up sessions containing the notebook context and the executed cell.

We could then use the context as the input and the executed cell as the ground truth data.

We could then measure how well we do at predicting the executed cell.
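
A minimal sketch of turning sessions into evaluation examples, with the context as input and the executed cell as ground truth. The session and evalExample types are hypothetical simplifications of what the log processor would produce. Dropping sessions without an executed cell is an assumption about how unusable sessions would be handled.

```go
package main

import "fmt"

// session is a hypothetical, simplified view of a sessionized log.
type session struct {
	ContextID    string
	Context      []string // notebook cells sent with the initial StreamGenerate request
	ExecutedCell string   // empty if the user never executed the cell
}

// evalExample pairs the model input with the ground truth output.
type evalExample struct {
	ID       string
	Input    []string
	Expected string
}

// buildExamples keeps only sessions that have both a context and an
// executed cell, since the others provide no ground truth to compare against.
func buildExamples(sessions []session) []evalExample {
	var out []evalExample
	for _, s := range sessions {
		if len(s.Context) == 0 || s.ExecutedCell == "" {
			continue
		}
		out = append(out, evalExample{ID: s.ContextID, Input: s.Context, Expected: s.ExecutedCell})
	}
	return out
}

func main() {
	sessions := []session{
		{ContextID: "s1", Context: []string{"# List the pods"}, ExecutedCell: "kubectl get pods"},
		{ContextID: "s2", Context: []string{"# Notes"}}, // never executed, so skipped
	}
	for _, ex := range buildExamples(sessions) {
		fmt.Printf("%s: %v -> %q\n", ex.ID, ex.Input, ex.Expected)
	}
}
```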

How Do We Handle RAG

Foyle learns from feedback. Foyle adaptively builds up a database of example (input, output) pairs

and uses them to prompt the model. The dataset of learned examples will impact the model performance

significantly. When we do evaluation we need to take into account this learning.

Since we are building the evaluation dataset from actual notebooks/prompts there is an ordering to the

examples. During evaluation we can replay the sessions in the same order they occurred. We can then let

Foyle adaptively build up its learned examples just as it does in production. Replaying the examples

in order ensures we don’t pollute evaluation by using knowledge of the future to predict the past.
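
The replay idea can be sketched as a loop that predicts first and learns second. Everything here is hypothetical: the example type, the Seq ordering key (which could be derived from the session start time), and the toy lookup predictor that stands in for Foyle's RAG-backed agent. Exact string match is used as the score only to keep the sketch short.

```go
package main

import (
	"fmt"
	"sort"
)

// example is a hypothetical evaluation example with an ordering key.
type example struct {
	Seq      int
	Input    string
	Expected string
}

// replay evaluates examples in the order they occurred. The predictor only
// sees examples learned from earlier sessions, because each example is added
// to the learned set after it has been predicted.
func replay(examples []example, predict func(input string, learned []example) string) int {
	ordered := append([]example(nil), examples...)
	sort.SliceStable(ordered, func(i, j int) bool { return ordered[i].Seq < ordered[j].Seq })

	correct := 0
	var learned []example
	for _, ex := range ordered {
		if predict(ex.Input, learned) == ex.Expected {
			correct++
		}
		learned = append(learned, ex) // learn only after predicting
	}
	return correct
}

// lookup is a toy predictor: it returns the output of an identical learned input.
func lookup(input string, learned []example) string {
	for _, l := range learned {
		if l.Input == input {
			return l.Expected
		}
	}
	return ""
}

func main() {
	examples := []example{
		{Seq: 2, Input: "list pods", Expected: "kubectl get pods"},
		{Seq: 1, Input: "list pods", Expected: "kubectl get pods"},
	}
	// The first occurrence cannot be predicted; the second one can.
	fmt.Println(replay(examples, lookup)) // prints 1
}
```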

LLM As Judge

Lots of code cells don’t contain single binary invocations but small mini programs. Therefore, the similarity

metric proposed in TN003 won’t work. We can use LLM as judge to decide whether